Gaussian Mixture Model (GMM)

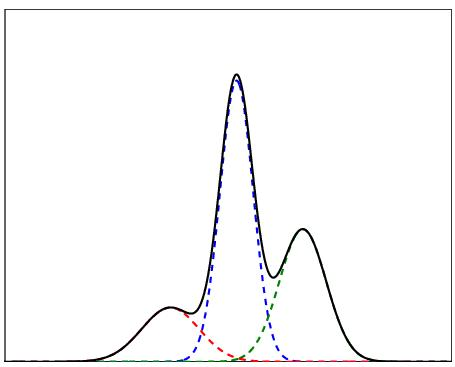

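A Gaussian mixture model (GMM) is a probabilistic model that represents a population as a mixture of multiple Gaussian distributions, each with its own parameters (mean, covariance, and mixing weight). Its density is a weighted sum of $K$ Gaussian components:

$$p(x) = \sum_{k=1}^{K} \pi_k \, \mathcal{N}(x \mid \mu_k, \Sigma_k)$$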

where:

- $\pi_k$ is the mixing weight for component $k$ (with $\pi_k \ge 0$ and $\sum_{k=1}^{K} \pi_k = 1$)

- $\mathcal{N}(x \mid \mu_k, \Sigma_k)$ is the $k$-th Gaussian component, with mean $\mu_k$ and covariance $\Sigma_k$
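To make the weighted sum concrete, here is a minimal 1-D sketch of evaluating the mixture density $p(x) = \sum_k \pi_k \mathcal{N}(x \mid \mu_k, \sigma_k^2)$. It assumes univariate data, so each covariance $\Sigma_k$ reduces to a scalar variance; the function names `normal_pdf` and `gmm_pdf` and the parameter values are hypothetical, chosen only for illustration.

```python
import math

def normal_pdf(x, mu, var):
    """Univariate Gaussian density N(x | mu, var)."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2 * math.pi * var)

def gmm_pdf(x, weights, means, variances):
    """Mixture density p(x) = sum_k pi_k * N(x | mu_k, var_k)."""
    # Mixing weights must form a probability distribution over components.
    if any(w < 0 for w in weights) or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("mixing weights must be non-negative and sum to 1")
    return sum(w * normal_pdf(x, m, v) for w, m, v in zip(weights, means, variances))

# Hypothetical parameters: 30% of the mass near -2, 70% near 3.
weights, means, variances = [0.3, 0.7], [-2.0, 3.0], [1.0, 0.5]
print(gmm_pdf(0.0, weights, means, variances))
```

Because each component integrates to 1 and the weights sum to 1, the mixture is itself a valid density.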

Intuition

Generative story: first roll a $K$-sided weighted die (weights $\pi_1, \dots, \pi_K$) to pick which cluster a point comes from, then draw the point from that cluster’s Gaussian. From the outside you only see $x$, not the die roll. A GMM is K-means with soft edges and ellipsoidal (not just spherical) clusters: points near a boundary belong partly to each cluster, and a cluster can be stretched or rotated because it has a full covariance, not just a center.
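The generative story translates almost line for line into a sampler. This is a minimal 1-D sketch, assuming univariate components parameterized by standard deviations (because `random.gauss` takes a standard deviation, not a variance); `sample_gmm` and the parameter values are hypothetical.

```python
import random

def sample_gmm(n, weights, means, stds, seed=0):
    """Draw n points from a 1-D GMM; also return the hidden component labels."""
    rng = random.Random(seed)
    xs, labels = [], []
    for _ in range(n):
        # Roll the K-sided weighted die to pick a component (the latent z)...
        k = rng.choices(range(len(weights)), weights=weights)[0]
        # ...then draw the point from that component's Gaussian.
        xs.append(rng.gauss(means[k], stds[k]))
        labels.append(k)
    return xs, labels

xs, labels = sample_gmm(10_000, [0.3, 0.7], [-2.0, 3.0], [1.0, 0.7])
# An observer only gets xs; labels (the die rolls) stay hidden.
print(sum(labels) / len(labels))  # fraction of points from component 1, near 0.7
```

Fitting a GMM is the inverse problem: recover the weights, means, and spreads from `xs` alone, without ever seeing `labels`.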

Log-likelihood

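For $N$ i.i.d. datapoints $x_1, \dots, x_N$, the observed log-likelihood is

$$\log p(x_1, \dots, x_N) = \sum_{n=1}^{N} \log \sum_{k=1}^{K} \pi_k \, \mathcal{N}(x_n \mid \mu_k, \Sigma_k)$$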

This form is hard to maximize directly, which motivates EM. The issue: the $\log$ of a sum doesn’t factor, so the derivative with respect to $\mu_k$ mixes in all the other components. If we could peek at which cluster $z_n$ generated each point, the $\log$ would sit inside the sum and each component’s MLE would decouple into “just fit a Gaussian to my points”. That peek is exactly what EM fakes with responsibilities $\gamma_{nk} = p(z_n = k \mid x_n)$, the posterior probability that component $k$ generated $x_n$.
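The argument above can be sketched as a small EM loop. This is a minimal 1-D illustration under stated assumptions, not a reference implementation: univariate components, a toy dataset, a hand-picked initialization, and no safeguards such as variance floors or convergence checks. The E-step computes responsibilities (the soft "peek"); the M-step then fits each component as a responsibility-weighted Gaussian. Log-space arithmetic via a hypothetical `logsumexp` helper keeps the log-of-a-sum from underflowing for points far from every component. All function names are hypothetical.

```python
import math

def log_normal_pdf(x, mu, var):
    """log N(x | mu, var) for a univariate Gaussian."""
    return -0.5 * (math.log(2 * math.pi * var) + (x - mu) ** 2 / var)

def logsumexp(values):
    """Stable log(sum(exp(v))): shift by the max so nothing underflows to 0."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))

def log_terms(x, weights, means, variances):
    # log(pi_k) + log N(x | mu_k, var_k) for every component k
    return [math.log(w) + log_normal_pdf(x, m, v)
            for w, m, v in zip(weights, means, variances)]

def log_likelihood(xs, weights, means, variances):
    """sum_n log sum_k pi_k N(x_n | mu_k, var_k): one log-of-a-sum per point."""
    return sum(logsumexp(log_terms(x, weights, means, variances)) for x in xs)

def e_step(xs, weights, means, variances):
    """Responsibilities r[n][k] = p(z_n = k | x_n), i.e. normalized joint terms."""
    resp = []
    for x in xs:
        t = log_terms(x, weights, means, variances)
        norm = logsumexp(t)
        resp.append([math.exp(v - norm) for v in t])
    return resp

def m_step(xs, resp):
    """With labels filled in softly, each component fits a weighted Gaussian."""
    weights, means, variances = [], [], []
    for k in range(len(resp[0])):
        nk = sum(r[k] for r in resp)  # effective number of points owned by k
        mu = sum(r[k] * x for r, x in zip(resp, xs)) / nk
        var = sum(r[k] * (x - mu) ** 2 for r, x in zip(resp, xs)) / nk
        weights.append(nk / len(xs))
        means.append(mu)
        variances.append(var)
    return weights, means, variances

xs = [-2.3, -1.9, -2.1, -1.5, 2.8, 3.1, 3.3, 2.6, 3.0]  # toy data, two clusters
params = ([0.5, 0.5], [-1.0, 1.0], [1.0, 1.0])           # rough initial guess
history = [log_likelihood(xs, *params)]
for _ in range(20):
    params = m_step(xs, e_step(xs, *params))
    history.append(log_likelihood(xs, *params))
print(params[1])  # fitted means
```

Each EM iteration cannot decrease the observed log-likelihood, so `history` should be non-decreasing; on this well-separated toy data the fitted means settle at the two cluster averages.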

How to think about this

Mixture models generalize k-means clustering to incorporate information about the covariance structure of the data as well as the centers of the latent Gaussians.
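One way to see the generalization is to run it backwards: k-means is the limiting case of a GMM with equal mixing weights and one shared spherical variance $\sigma^2$ that shrinks toward 0, where the soft responsibilities harden into nearest-center assignments. A minimal 1-D sketch, with hypothetical function names and centers:

```python
import math

def soft_assign(x, means, var):
    """GMM responsibilities with equal weights and one shared variance (1-D).

    Equal weights and the shared normalizing constant cancel when normalizing,
    so only the squared distances scaled by the variance remain.
    """
    logits = [-(x - m) ** 2 / (2 * var) for m in means]
    top = max(logits)  # subtract the max before exponentiating, for stability
    exps = [math.exp(l - top) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def hard_assign(x, means):
    """k-means assignment: one-hot vector for the nearest center."""
    nearest = min(range(len(means)), key=lambda k: abs(x - means[k]))
    return [1.0 if k == nearest else 0.0 for k in range(len(means))]

means = [0.0, 4.0]
for var in [4.0, 1.0, 0.1, 0.01]:
    # As var shrinks, the soft assignment of x = 1.5 hardens toward [1, 0].
    print(var, [round(r, 3) for r in soft_assign(1.5, means, var)], hard_assign(1.5, means))
```

Letting each component keep its own weight and full covariance, instead of a shared vanishing variance, is what recovers the soft, ellipsoidal clusters described in the Intuition section.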