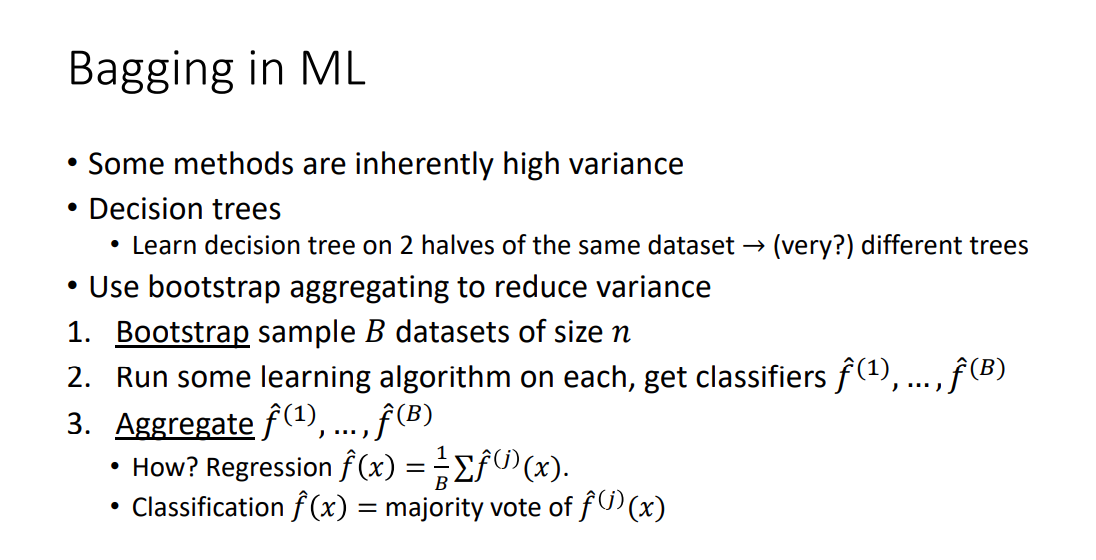

Bootstrap Aggregating (Bagging)

Bagging is bootstrap sampling plus aggregation: train many copies of a high-variance base learner (like decision trees) on resampled datasets and average their predictions to reduce variance.

Bootstrap sampling intuition

Averaging $B$ predictors trained on independent datasets of size $n$ reduces variance by a factor of $B$. We don’t have $B$ independent datasets, so we cheat and resample with replacement from the one we have. The resampled datasets are not truly independent, but this works well in practice.

The Variance Argument

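Suppose we want to estimate $\mu = \mathbb{E}[X]$ given i.i.d. samples $X_1, \dots, X_n$ with variance $\sigma^2$. Using the empirical mean $\hat{\mu} = \frac{1}{n} \sum_{i=1}^{n} X_i$:

$$\mathrm{Var}(\hat{\mu}) = \frac{\sigma^2}{n}$$

If we had $Bn$ points, we could form $B$ disjoint sets of size $n$, compute $\hat{\mu}_b$ for each, and average:

$$\bar{\mu} = \frac{1}{B} \sum_{b=1}^{B} \hat{\mu}_b, \qquad \mathrm{Var}(\bar{\mu}) = \frac{\sigma^2}{Bn}$$

where:

- $B$ is the number of disjoint subsets

- $\hat{\mu}_b$ is the empirical mean on subset $b$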

Intuition

Averaging $B$ independent noisy estimates cuts variance by a factor of $B$: the errors in different copies point in random directions and cancel, while the signal adds. The individual trees don’t need to be good; they need to be diverse and uncorrelated. Bagging’s whole job is to manufacture that diversity from a single fixed dataset.

Averaging over $B$ disjoint sets cuts the variance by a factor of $B$, but it required $B$ times more data.
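A quick simulation can make the factor-of-$B$ claim concrete. The sketch below assumes standard normal data (true variance 1) and arbitrary values $n = 20$, $B = 10$. The function names are illustrative and do not come from the slides.

```python
import random
import statistics

def empirical_mean(n, rng):
    """Mean of n i.i.d. standard normal draws (true mean 0, variance 1)."""
    return sum(rng.gauss(0.0, 1.0) for _ in range(n)) / n

def averaged_mean(n, B, rng):
    """Average of B empirical means, each computed on its own fresh set of n points."""
    return sum(empirical_mean(n, rng) for _ in range(B)) / B

rng = random.Random(0)
n, B, trials = 20, 10, 4000

# Estimate the variance of each estimator by repeating the experiment many times.
var_single = statistics.pvariance([empirical_mean(n, rng) for _ in range(trials)])
var_averaged = statistics.pvariance([averaged_mean(n, B, rng) for _ in range(trials)])

print(f"one set of n points: {var_single:.4f} (theory {1 / n:.4f})")
print(f"average over B sets: {var_averaged:.4f} (theory {1 / (n * B):.4f})")
print(f"ratio: {var_single / var_averaged:.1f} (theory {B})")
```

The measured ratio should land close to $B$, at the cost of drawing $Bn$ fresh points per trial.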

Bootstrap Sampling

Given a dataset $D$ of size $n$, create $B$ datasets $D_1, \dots, D_B$ of size $n$ by drawing $n$ samples with replacement from the original.

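Example (illustrative): from $D = \{x_1, x_2, x_3, x_4\}$, one possible bootstrap sample is $\{x_2, x_4, x_4, x_1\}$, where $x_4$ appears twice and $x_3$ is left out.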

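Each bootstrap sample contains ~63% of the unique original points, since a given point is missed by all $n$ draws with probability $(1 - 1/n)^n \approx 1/e \approx 0.37$. The remaining ~37% “out-of-bag” points give you a free held-out set per tree: you can evaluate tree $b$ on the points it never saw.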
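The sampling step and the out-of-bag split can be sketched with the standard library alone. The helper names `bootstrap_indices` and `out_of_bag` are hypothetical, and the dataset is represented only by its indices.

```python
import random

def bootstrap_indices(n, rng):
    """Draw n indices uniformly with replacement from range(n)."""
    return [rng.randrange(n) for _ in range(n)]

def out_of_bag(n, indices):
    """Indices of the original points that never appear in the sample."""
    return sorted(set(range(n)) - set(indices))

rng = random.Random(0)
n = 10_000
idx = bootstrap_indices(n, rng)
oob = out_of_bag(n, idx)

unique_fraction = len(set(idx)) / n
print(f"unique fraction: {unique_fraction:.3f}")  # close to 1 - 1/e
print(f"OOB fraction:    {len(oob) / n:.3f}")     # close to 1/e
```

Working with indices rather than copies of the data keeps the out-of-bag set easy to compute: it is the set difference between all indices and the sampled ones.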

Because the samples aren’t independent, the variance doesn’t literally drop by a factor of $B$, but empirically it still drops substantially.
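One standard way to quantify this, stated here as an assumption rather than something taken from the slides: suppose the $B$ predictors are identically distributed, each with variance $\sigma^2$, and every pair has correlation $\rho$. Then the variance of their average works out as follows.

```latex
\mathrm{Var}\!\left(\frac{1}{B}\sum_{b=1}^{B} f_b(x)\right)
  = \frac{1}{B^2}\Bigl(B\,\sigma^2 + B(B-1)\,\rho\,\sigma^2\Bigr)
  = \rho\,\sigma^2 + \frac{1-\rho}{B}\,\sigma^2
```

With $\rho = 0$ this recovers the independent case $\sigma^2 / B$. With $\rho > 0$ the first term does not shrink as $B$ grows, so lowering the correlation between predictors matters as much as adding more of them.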

Algorithm

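- Bootstrap-sample $B$ datasets $D_1, \dots, D_B$ of size $n$

- Train a model $f_b$ on each $D_b$

- Aggregate:

  - Regression: $\bar{f}(x) = \frac{1}{B} \sum_{b=1}^{B} f_b(x)$

  - Classification: majority vote of $f_1(x), \dots, f_B(x)$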
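The steps above can be sketched in plain Python. The base learner here is a 1-nearest-neighbour predictor on a scalar input, chosen only because it is short and high-variance. It is a stand-in for a decision tree, and all function names are hypothetical.

```python
import random
from collections import Counter

def bootstrap_sample(data, rng):
    """Draw len(data) points with replacement."""
    return [rng.choice(data) for _ in data]

def fit_1nn(train):
    """Stand-in base learner: return the label of the nearest training input."""
    def predict(x):
        return min(train, key=lambda p: abs(p[0] - x))[1]
    return predict

def bag(data, fit, B, rng):
    """Sample and train: one model per bootstrap sample."""
    return [fit(bootstrap_sample(data, rng)) for _ in range(B)]

def predict_regression(models, x):
    """Aggregate for regression: average the B predictions."""
    return sum(m(x) for m in models) / len(models)

def predict_classification(models, x):
    """Aggregate for classification: majority vote over the B predictions."""
    return Counter(m(x) for m in models).most_common(1)[0][0]

rng = random.Random(0)

# Regression: noisy samples of y = x^2 on a grid over [0, 1].
data = [(i / 49, (i / 49) ** 2 + rng.gauss(0.0, 0.05)) for i in range(50)]
models = bag(data, fit_1nn, B=50, rng=rng)
y_hat = predict_regression(models, 0.5)
print(y_hat)  # roughly 0.25, up to noise

# Classification: label is 1 when x > 0.5, else 0.
labelled = [(x, int(x > 0.5)) for x, _ in data]
voters = bag(labelled, fit_1nn, B=25, rng=rng)
label = predict_classification(voters, 0.9)
print(label)
```

Each model is trained independently of the others, so the loop inside `bag` could be run in parallel without changing the result.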

Where Bagging Helps

Bagging helps most with high-variance base learners. Decision trees are the classic example: tiny data perturbations produce very different trees. Bagging low-variance learners (like a well-regularized linear model) gains little.
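A small experiment can illustrate the contrast. The sketch below compares a high-variance learner (1-nearest-neighbour) with a low-variance one (predict the mean label) on pure-noise labels, measuring how much bagging shrinks the variance of the prediction at one query point. The learners, the constants, and the name `variance_ratio` are all assumptions made for illustration.

```python
import random
import statistics

def fit_1nn(train):
    """High-variance learner: predict the label of the nearest training input."""
    return lambda x: min(train, key=lambda p: abs(p[0] - x))[1]

def fit_mean(train):
    """Low-variance learner: predict the mean label, ignoring the input."""
    mean_y = sum(y for _, y in train) / len(train)
    return lambda x: mean_y

def bagged(fit, train, B, rng):
    """Average the predictions of B models, each fit on a bootstrap sample."""
    models = [fit([rng.choice(train) for _ in train]) for _ in range(B)]
    return lambda x: sum(m(x) for m in models) / B

def variance_ratio(fit, rng, n=30, B=25, trials=300, x0=0.52):
    """Var(bagged prediction at x0) / Var(single prediction at x0)."""
    single, bag = [], []
    for _ in range(trials):
        # Fixed inputs on [0, 1]; the target is constant 0, so only label noise varies.
        train = [(i / (n - 1), rng.gauss(0.0, 1.0)) for i in range(n)]
        single.append(fit(train)(x0))
        bag.append(bagged(fit, train, B, rng)(x0))
    return statistics.pvariance(bag) / statistics.pvariance(single)

rng = random.Random(0)
ratio_1nn = variance_ratio(fit_1nn, rng)
ratio_mean = variance_ratio(fit_mean, rng)
print(f"1-NN variance ratio (bagged / single): {ratio_1nn:.2f}")
print(f"mean variance ratio (bagged / single): {ratio_mean:.2f}")
```

In this setup the 1-NN ratio should come out well below 1, while the mean predictor's ratio should stay near 1, since averaging bootstrap means just reproduces the sample mean plus a little resampling noise.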

Random forests extend bagging on decision trees with feature subsampling at each split, decorrelating the trees further.
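The feature-subsampling step can be sketched as a split search restricted to a random subset of columns. This is a minimal illustration for regression with a squared-error criterion, not a full tree builder. The parameter name `max_features` and the helper names are hypothetical.

```python
import random

def sse(ys):
    """Sum of squared errors around the mean (0 for an empty list)."""
    if not ys:
        return 0.0
    mean = sum(ys) / len(ys)
    return sum((y - mean) ** 2 for y in ys)

def best_split(X, y, features):
    """Best (score, feature, threshold) by SSE, searching only the given features."""
    best = None
    for j in features:
        for t in sorted({row[j] for row in X}):
            left = [yi for row, yi in zip(X, y) if row[j] <= t]
            right = [yi for row, yi in zip(X, y) if row[j] > t]
            if not left or not right:
                continue  # a split must put points on both sides
            score = sse(left) + sse(right)
            if best is None or score < best[0]:
                best = (score, j, t)
    return best

def random_forest_split(X, y, max_features, rng):
    """Draw a fresh random feature subset, then pick the best split within it."""
    d = len(X[0])
    features = rng.sample(range(d), max_features)
    return features, best_split(X, y, features)

rng = random.Random(0)
X = [[rng.random() for _ in range(4)] for _ in range(40)]
y = [row[0] for row in X]  # target depends only on feature 0
features, split = random_forest_split(X, y, max_features=2, rng=rng)
print(features, split)
```

When feature 0 is not in the drawn subset, the split is forced onto a weaker feature. That is the point: trees grown on different subsets make different mistakes, which lowers the correlation between them.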

Bagging vs Boosting

- Bagging: parallel, reduces variance, trains each learner on its own bootstrap sample of the full data

- Boosting: sequential, reduces bias, reweights data to focus on mistakes

Slides: http://www.gautamkamath.com/courses/CS480-fa2025-files/lec8.pdf