Decision Tree

A decision tree is a hierarchical classifier (or regressor) built by recursively splitting the feature space on one variable at a time.

- Simple, interpretable, but high-variance

- Often combined via Bagging or Boosting for better performance

Building a Tree

- Start with one node (the root) containing all data

- Recursively split each leaf: choose a variable and threshold , partition points into and

- Stop based on some criterion (see below)

- Predict by walking down the tree from root to leaf and returning the leaf’s majority label (classification) or mean label (regression)

Which Split?

Choose a node loss that is small for pure nodes (all same label) and large for mixed ones. Pick the split that minimizes the weighted sum of child node costs.

where:

- are the left/right partitions at threshold on feature

- is the node loss measuring impurity

Intuition

Each split is a yes/no question. You want the question that carves the data into the cleanest piles: ideally “all cancer” on one side, “all healthy” on the other. The weighted sum is there because a tiny-but-pure child shouldn’t outvote a large mixed one. Big children matter more.

For each feature , you only need to try thresholds (between sorted values of ).

Node Loss Functions (Classification)

Let be the fraction of class in node , and .

- Misclassification loss: (fraction you’d get wrong if you guessed the majority)

- Entropy: (this is Shannon entropy)

- Gini index: (probability two random draws from the node disagree)

Intuition

Entropy is how surprised you’d be by a random label drawn from this node. A pure node, no surprise. A 50/50 node, maximum surprise. Splitting to reduce entropy = asking the most informative question next. Gini is almost the same idea with a different curve: the chance that two people picked at random from this node would disagree on the label. Misclassification loss is flat in the middle, so it can’t tell a 60/40 split apart from a 51/49 one; entropy and Gini curve more, so gradients flow and splits get chosen more sensibly.

Entropy and Gini are differentiable and tend to produce more balanced splits than misclassification.

Regression loss: , where is the mean label in .

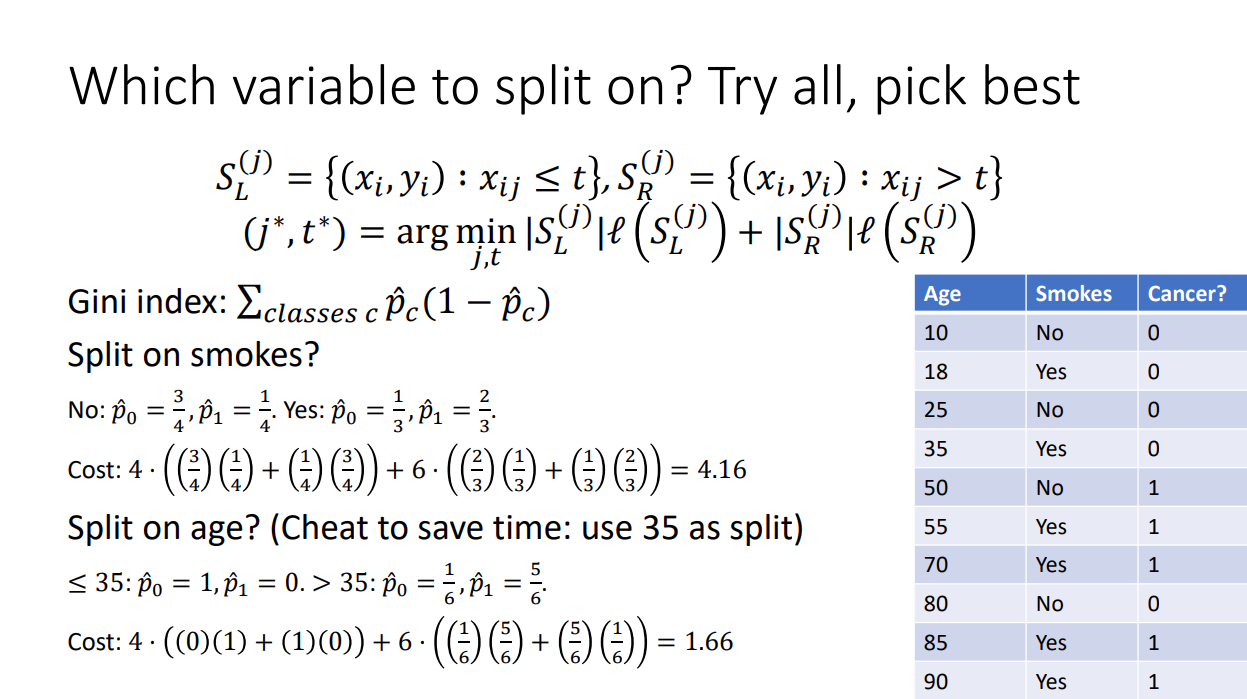

Example: Gini Split

| Age | Smokes | Cancer? |

|---|---|---|

| 10 | No | 0 |

| 18 | Yes | 0 |

| 25 | No | 0 |

| 35 | Yes | 0 |

| 50 | No | 1 |

| 55 | Yes | 1 |

| 70 | Yes | 1 |

| 80 | No | 0 |

| 85 | Yes | 1 |

| 90 | Yes | 1 |

Split on smokes? No (4 pts): . Yes (6 pts): . Weighted Gini cost:

Split on age > 35? (4 pts): all 0. (6 pts): . Cost: → age wins.

Stopping Criteria

- Max depth reached

- Few examples in a leaf (e.g., )

- Leaf is homogeneous (pure)

- Split improvement is below a threshold

- Running time budget exhausted

Pruning

Alternative to early stopping: grow the tree fully, then prune. Choose the subtree that minimizes

where:

- controls the tradeoff: keeps the full tree, collapses to a single node

Growing first then pruning lets the tree see useful splits that only pay off a few levels down, which a greedy early-stop would miss. Pick via validation.

A decision stump is a 3-node tree (one split). Very weak on its own but useful as the base learner in AdaBoost.

Pros / Cons

- Interpretable, easy to visualize

- Handles mixed feature types, no need to normalize

- Can model nonlinear (axis-aligned) boundaries

- High variance: small data changes produce very different trees

- Struggles with boundaries that are not axis-aligned (e.g. a diagonal line)

- Tends to overfit without regularization/pruning

Improvements:

- Bootstrap Aggregating (Bagging): average many trees on bootstrap samples

- Random Forest: bagging + random feature subsets at each split

- Boosting (AdaBoost, Gradient Boosted Trees)

Slides: http://www.gautamkamath.com/courses/CS480-fa2025-files/lec7.pdf