Greedy Algorithms, Dynamic Programming

Interval Scheduling

I really like the notes by Lapchi on this. Very intuitive.

https://cs.uwaterloo.ca/~lapchi/cs341/notes/L11.pdf

Don’t focus on the algorithm, but understanding rather why it is correct. And then, be able to come up with the algorithm fast.

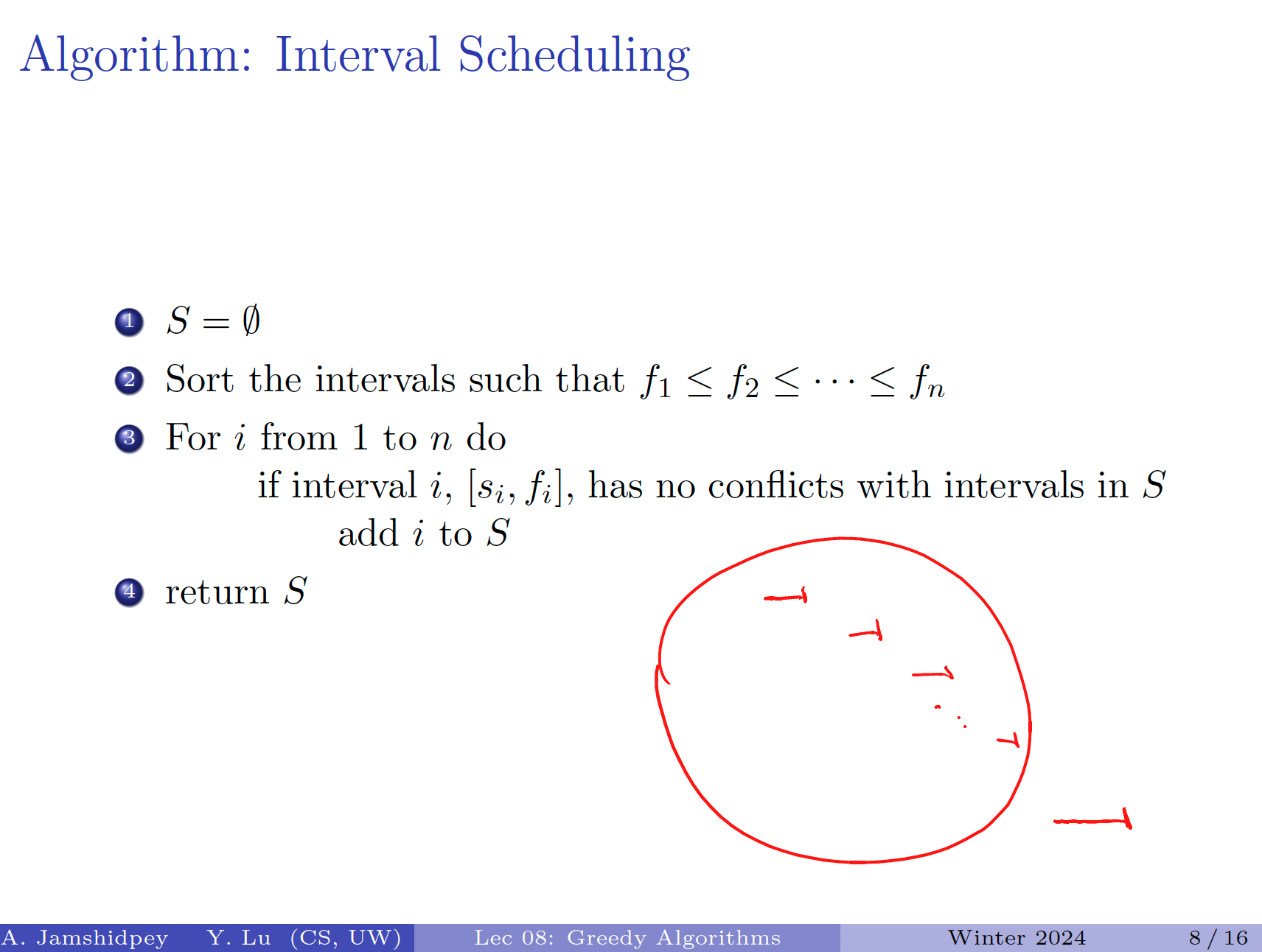

Interval Scheduling

Interval Scheduling Problem

Input: intervals . Output: a maximal subset of disjoint intervals.

There are a few greedy ideas that don’t work:

- Choose earliest starting time

- Counter-example: [1,10], [2,5], [6,8]

- Choose shortest intervals first

- Counter-example:

- Choose intervals with the minimum number of overlaps first

- Counter-example:

The proof here is by assuming an optimal solution , and comparing to the output set produced by the greedy algorithm .

And then assume that .

Let be an index into , and be an index into .

At , (since the greedy algorithm takes interval with the earliest finish time)

Inductive hypothesis: Therefore,

So is an interval that is considered by the greedy algorithm. On the next interval that it selects, it’s either this interval, or an even earlier one.

Now, let be the last interval that is in and . We have Since O is bigger, there must exist the interval . we know that

However, we know that So this interval must be considered by the greedy algorithm. This. is a contradiction.

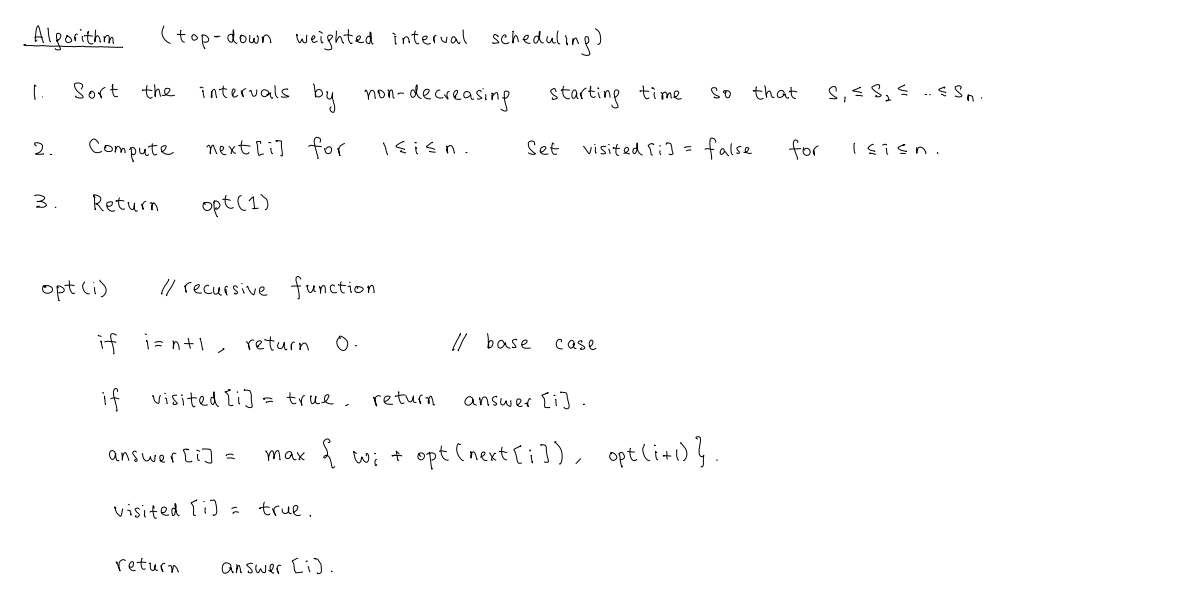

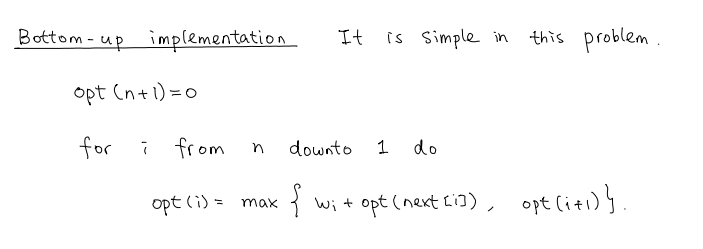

Weighted Interval Scheduling