Machine Epsilon

Denoted by $E$. Learned this in CS370.

The maximum relative error of a floating point system $F$ is a measure of machine precision called machine epsilon.

$E$ is the smallest number such that, using the floating point system $F$,

$$\mathrm{fl}(1 + E) > 1.$$

Machine epsilon depends on exactly how we determine $\mathrm{fl}(x)$ from the real number $x$, i.e., which slot we assign the real number to.
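The definition above can be probed numerically. The following is a minimal sketch, assuming Python floats are IEEE double precision ($\beta = 2$, $t = 53$) with round-to-nearest and ties-to-even, which is not the ties-round-up convention used in these notes. Under that assumption the halving loop stops at the gap between 1 and the next float, $2^{-52}$, rather than at half that gap. The function name `halving_epsilon` is made up for illustration.

```python
import sys

def halving_epsilon():
    """Halve eps until adding the next candidate to 1.0 no longer changes it."""
    eps = 1.0
    while 1.0 + eps / 2.0 > 1.0:
        eps /= 2.0
    return eps

eps = halving_epsilon()
print(eps)
# Compare with the value Python reports for the spacing just above 1.0.
print(eps == sys.float_info.epsilon)
```

With ties-to-even, $1 + 2^{-53}$ lands exactly halfway and rounds back to 1, which is why the loop does not go one step further.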

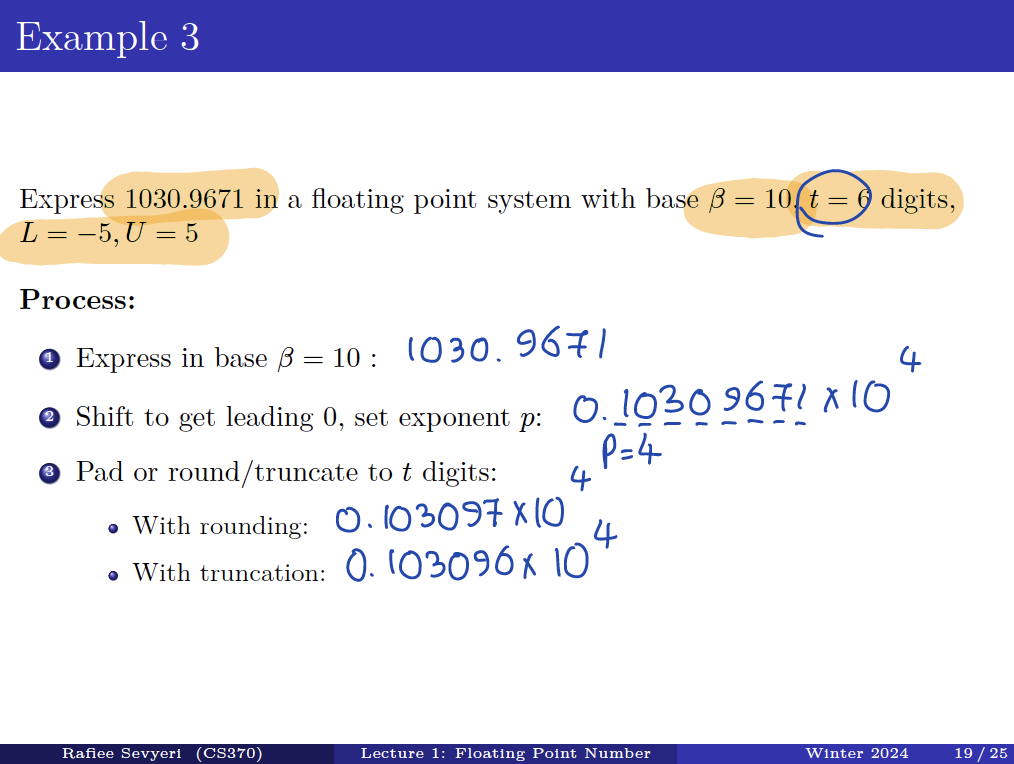

Rounding vs. Truncation

- Round-to-nearest – rounds to the closest available number in $F$.

- Usually the default.

- We’ll break ties by simply rounding up. (Various other options exist …)

- Truncation/“Chopping” – rounds to the next number in $F$ towards zero, i.e., simply discards any digits after the $t$-th.
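The two rules can be compared in a toy base-10 system. This is a rough sketch using Python's `decimal` module as a stand-in for $F$, assuming `ROUND_HALF_UP` plays the role of round-to-nearest with ties rounded up (it rounds ties away from zero, which agrees for positive inputs) and `ROUND_DOWN` plays the role of chopping. The helper name `fl` and the sample values are chosen only for illustration.

```python
from decimal import Context, ROUND_HALF_UP, ROUND_DOWN

def fl(x, t, rounding):
    """Map the decimal string x to t significant base-10 digits."""
    return Context(prec=t, rounding=rounding).create_decimal(x)

for x in ("3.14159", "1.2345"):
    nearest = fl(x, 4, ROUND_HALF_UP)  # round-to-nearest, ties up
    chopped = fl(x, 4, ROUND_DOWN)     # discard digits after the 4th
    print(x, nearest, chopped)
```

The second value, 1.2345, is an exact tie at four digits, so the two rules pick different neighbours.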

Example

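If a computer uses base $\beta$ arithmetic with $t$ digits in the significand, i.e., $F = \{\beta, t, L, U\}$ (where $L$ and $U$ bound the exponent), and uses round-to-nearest, then the value of machine epsilon (or unit round-off error) is $E = \frac{1}{2}\beta^{1-t}$. If truncation is used instead, then $E$ will be $\beta^{1-t}$.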
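As a quick sanity check of the two standard formulas, $\frac{1}{2}\beta^{1-t}$ for round-to-nearest and $\beta^{1-t}$ for truncation, here they are evaluated for a hypothetical decimal system with $\beta = 10$, $t = 3$ (values picked only for illustration) and for IEEE double precision, assuming $\beta = 2$, $t = 53$:

$$
\begin{aligned}
\beta = 10,\ t = 3,\ \text{round-to-nearest:} &\quad E = \tfrac{1}{2}\cdot 10^{1-3} = 0.005 \\
\beta = 10,\ t = 3,\ \text{truncation:} &\quad E = 10^{1-3} = 0.01 \\
\beta = 2,\ t = 53,\ \text{round-to-nearest:} &\quad E = \tfrac{1}{2}\cdot 2^{1-53} = 2^{-53} \approx 1.11 \times 10^{-16} \\
\beta = 2,\ t = 53,\ \text{truncation:} &\quad E = 2^{1-53} = 2^{-52} \approx 2.22 \times 10^{-16}
\end{aligned}
$$

In the decimal round-to-nearest case, $1 + 0.005 = 1.005$ is a tie between $1.00$ and $1.01$ and rounds up to $1.01 > 1$, while any smaller positive increment rounds back to $1.00$.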

In the real world

In real-world floating point systems, there are additional choices available for rounding modes, including round-up, round-down, and round-towards-infinity, as well as options for tie-breaking under round-to-nearest (when the real value is halfway between two floating point numbers), such as round-to-odd, round-to-even, etc. For simplicity, this course will only consider truncation or round-to-nearest (with tie-breaking by rounding up, if it arises).
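Some of these extra modes can be tried out with Python's `decimal` module. This is only an illustrative sketch: the mapping of the `decimal` constants to the mode names above is my assumption, `round_all` is a made-up helper, and `decimal` is a base-10 software system rather than hardware floating point.

```python
from decimal import (Context, ROUND_CEILING, ROUND_FLOOR, ROUND_DOWN,
                     ROUND_HALF_UP, ROUND_HALF_EVEN)

# Assumed correspondence between decimal's constants and rounding-mode names.
MODES = {
    "round_up": ROUND_CEILING,      # towards +infinity
    "round_down": ROUND_FLOOR,      # towards -infinity
    "truncate": ROUND_DOWN,         # towards zero
    "half_up": ROUND_HALF_UP,       # nearest, ties away from zero
    "half_even": ROUND_HALF_EVEN,   # nearest, ties to even last digit
}

def round_all(x, t=1):
    """Round the decimal string x to t significant digits under each mode."""
    return {name: str(Context(prec=t, rounding=mode).create_decimal(x))
            for name, mode in MODES.items()}

for x in ("2.5", "3.5", "-2.5"):
    print(x, round_all(x))
```

The inputs are exact ties at one significant digit, so the tie-breaking rules and the directed modes all become visible, including the sign dependence for -2.5.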

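The signed relative error between any real number $x$ and its floating point representation $\mathrm{fl}(x)$ can be written as a small multiple of $E$ (assuming $x \neq 0$).

The signed relative error of using $\mathrm{fl}(x)$ for $x$ is

$$\delta = \frac{\mathrm{fl}(x) - x}{x},$$

which, by the design of $F$, does not exceed $E$ in size. That is, $|\delta| \le E$. Thus we have the relationship

$$\mathrm{fl}(x) = x(1 + \delta).$$

- $\delta$ is some signed number which must satisfy $|\delta| \le E$.

- $E$ is defined as some positive number that gives a bound on $|\delta|$.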
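The bound on the relative error can be checked exactly with rational arithmetic. This is a minimal sketch, assuming Python floats are IEEE doubles with round-to-nearest, so that the bound is $2^{-53}$, and assuming the inputs are well inside the normal range (no overflow or underflow). The helper name `signed_relative_error` is made up for illustration.

```python
from fractions import Fraction

# Assumed bound for IEEE double precision under round-to-nearest.
E = Fraction(1, 2**53)

def signed_relative_error(x):
    """Return (fl(x) - x) / x exactly, for a nonzero Fraction x."""
    fl_x = Fraction(float(x))  # exact rational value of the stored double
    return (fl_x - x) / x

for x in (Fraction(1, 10), Fraction(1, 3), Fraction(2, 3)):
    delta = signed_relative_error(x)
    # The stored double equals x * (1 + delta) exactly, with |delta| <= E.
    print(x, float(delta), abs(delta) <= E, Fraction(float(x)) == x * (1 + delta))
```

The sign of the error depends on whether the stored double lies above or below $x$, which is why it is reported as a signed quantity.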