Floating-Point Number System

Float number.

https://codingnest.com/the-little-things-comparing-floating-point-numbers/

First introduced through PMPP. Formally learned through CS370.

Representing a Real Number

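Each positive number $x$ in $\mathbb{R}$ can be represented in normalized form by

$$x = 0.d_1 d_2 d_3 \ldots \times \beta^p$$

where

- $d_k$ are digits in base $\beta$, that is, $d_k \in \{0, 1, \ldots, \beta - 1\}$;

- ‘normalized’ means $d_1 \neq 0$;

- exponent $p$ is an integer (positive, negative or zero).

The sequence of digits $d_1 d_2 d_3 \ldots$ is called the mantissa (also significand).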
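To make the normalized form concrete, here is a minimal sketch that extracts the exponent and the leading mantissa digits of a positive number. The function name `normalize` and its parameters are my own, not from the course notes, and it uses exact `Fraction` arithmetic so the digits are not disturbed by binary rounding.

```python
from fractions import Fraction

def normalize(x, beta=10, ndigits=8):
    """Return (digits, p) such that x ~ 0.d1 d2 ... * beta**p with d1 != 0.

    Hypothetical helper: x must be positive, and only the first
    `ndigits` mantissa digits are produced.
    """
    x = Fraction(x)
    if x <= 0:
        raise ValueError("x must be positive")
    p = 0
    # Scale x into [1/beta, 1) so that the first digit is non-zero.
    while x >= 1:
        x /= beta
        p += 1
    while x < Fraction(1, beta):
        x *= beta
        p -= 1
    digits = []
    for _ in range(ndigits):
        x *= beta
        d = int(x)        # next digit, always in {0, ..., beta - 1}
        digits.append(d)
        x -= d
    return digits, p

print(normalize(Fraction("123.45"), beta=10, ndigits=5))  # ([1, 2, 3, 4, 5], 3)
print(normalize(Fraction(5, 8), beta=2, ndigits=4))       # ([1, 0, 1, 0], 0)
```

So 123.45 is $0.12345 \times 10^3$, and 5/8 is $0.101 \times 2^0$ in binary.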

Knowing this, we can define a Floating-Point Number System.

Floating Point Number System

Every floating point number system that we will consider can be characterized by four integer parameters, $\{\beta, t, L, U\}$.

- $\beta$ is the base (ex: 10)

- $t$ is the number of digits in the mantissa (represents density / precision)

- $L$ and $U$ are bounds on the exponent (represents extent / range)

Thus, the numbers in such a system are precisely those of the form $\pm 0.d_1 d_2 \ldots d_t \times \beta^p$ with $L \le p \le U$ and $d_1 \neq 0$, or $0$ (a very special floating point number).
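Since such a system is finite, a tiny one can be listed in full. The sketch below enumerates every number for a toy choice of parameters (base 2, 3 mantissa digits, exponent from -1 to 2). The toy parameters and the name `enumerate_system` are just for illustration.

```python
from fractions import Fraction
from itertools import product

def enumerate_system(beta, t, L, U):
    """All numbers +/- 0.d1...dt * beta**p with d1 != 0 and L <= p <= U, plus 0."""
    values = {Fraction(0)}
    for p in range(L, U + 1):
        for digits in product(range(beta), repeat=t):
            if digits[0] == 0:
                continue  # skip non-normalized mantissas
            mantissa = sum(Fraction(d, beta ** (k + 1)) for k, d in enumerate(digits))
            value = mantissa * Fraction(beta) ** p
            values.update((value, -value))
    return sorted(values)

toy = enumerate_system(beta=2, t=3, L=-1, U=2)
positives = [v for v in toy if v > 0]
print(len(toy))                       # 33
print(positives[0], positives[-1])    # 1/4 7/2
```

The count agrees with $2(\beta - 1)\beta^{t-1}(U - L + 1) + 1 = 2 \cdot 1 \cdot 4 \cdot 4 + 1 = 33$.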

In practice, there are 2 common standardized floating point systems:

- IEEE Single Precision (fp32)

- IEEE Double Precision (fp64)

See IEEE Floating Point Standard.

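IEEE single precision: $\{\beta = 2, t = 24, L = -126, U = 127\}$. IEEE double precision: $\{\beta = 2, t = 53, L = -1022, U = 1023\}$. The exponent bounds here are stated in IEEE's own normalization convention, with the leading digit to the left of the radix point.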

There’s also FP16 (half-precision)

This is super confusing

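Go see the IEEE Standard, because it splits numbers differently from the form at the beginning of this note. IEEE uses a normalization convention in which the first non-zero digit lies to the left of the radix point ($1.d_2 d_3 \ldots$), rather than to the right ($0.d_1 d_2 \ldots$). The same number therefore has an exponent that is smaller by one in the IEEE convention.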
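One way to see the two conventions side by side is through Python's `sys.float_info` and `math.frexp`, which both follow the C model with the leading digit to the right of the radix point, as at the top of this note. This is a sketch assuming the platform's `float` is an IEEE double, which is true on typical machines but not guaranteed by the language.

```python
import math
import sys

info = sys.float_info
# On a platform with IEEE doubles this prints: 2 53 -1021 1024
print(info.radix, info.mant_dig, info.min_exp, info.max_exp)

# frexp returns (m, p) with x == m * 2**p and 0.5 <= m < 1,
# i.e. the 0.d1 d2 ... form with d1 = 1.
m, p = math.frexp(8.0)
print(m, p)  # 0.5 4

# IEEE writes the same number as 1.0 * 2**3, so its exponent is p - 1.
ieee_exponent = p - 1
print(ieee_exponent)  # 3
```

The exponent range -1021 to 1024 in this convention corresponds to -1022 to 1023 in the IEEE convention.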

Int vs Floats and Doubles

I was thinking of it like an integer, where the largest signed 32-bit int is $2^{31} - 1$. But it’s actually more complicated for floats and doubles.
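As a rough comparison, the largest double can still be written in closed form, it just depends on both the mantissa length and the exponent bound. In the $0.d_1 d_2 \ldots$ convention of this note, the largest number is $(1 - \beta^{-t}) \times \beta^U$. The sketch below checks this for doubles, assuming $t = 53$ and $U = 1024$ in that convention and that Python's `float` is an IEEE double.

```python
import sys

INT32_MAX = 2**31 - 1   # largest signed 32-bit integer

# Largest double: all t mantissa digits set, at the largest exponent U.
# (1 - 2**-t) * 2**U, written as an exact Python integer to avoid overflow.
t, U = 53, 1024
largest = (2**t - 1) * 2**(U - t)

print(INT32_MAX)                             # 2147483647
print(float(largest) == sys.float_info.max)  # True
```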

See IEEE Floating Point Standard for the 3 parts of the number (sign, exponent, mantissa)
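A minimal sketch of pulling the three parts out of a single precision number, using the standard `struct` module. The helper name `fp32_fields` is my own; the field widths (1 sign bit, 8 exponent bits, 23 stored mantissa bits) and the exponent bias of 127 are those of IEEE single precision.

```python
import struct

def fp32_fields(x):
    """Split x, rounded to IEEE single precision, into (sign, exponent, fraction)."""
    # Pack as a big-endian float32, then reinterpret the same 4 bytes as an unsigned int.
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    sign = bits >> 31
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF       # 23 stored mantissa bits (leading 1 is implicit)
    return sign, exponent, fraction

print(fp32_fields(1.0))      # (0, 127, 0)
print(fp32_fields(-2.0))     # (1, 128, 0)
print(fp32_fields(0.15625))  # (0, 124, 2097152)
```

For example, 0.15625 is $1.01_2 \times 2^{-3}$, so the stored exponent is $-3 + 127 = 124$ and the fraction bits are `0100...0`, which is $2^{21} = 2097152$.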

Properties of Floating Point Numbers

Relationship between Real and Floating Point Numbers

This is really important to understand.

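In general, $fl(x) \neq x$, where $fl(x)$ denotes the floating point representation of a real number $x$, because most real numbers cannot be represented exactly. In order to compute $fl(x)$, we must modify $x$ to become a valid representable floating point number, typically by eliminating some of its trailing (least significant) digits.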

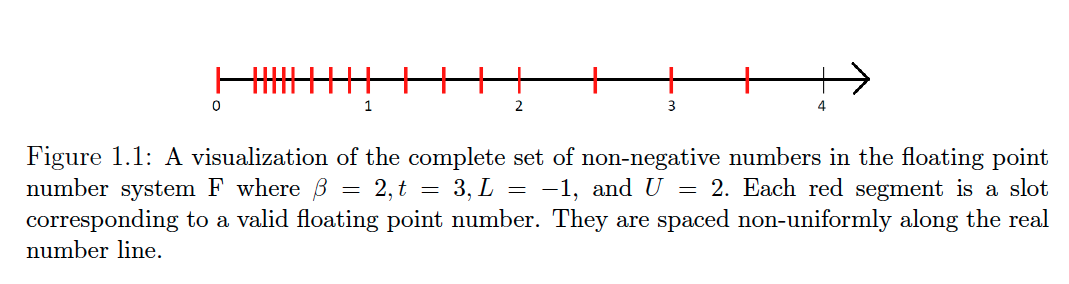

One way to think about going from a real number to a floating point number is to lay its digits out in slots.
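The slot picture can be sketched in code: normalize the number, keep the first $t$ mantissa digits, and drop the rest. This is a toy version that chops (truncates) rather than rounding to nearest, and it ignores the exponent bounds. The name `fl_chop` and the default base 10 with 4 digits are my own choices for illustration.

```python
from fractions import Fraction

def fl_chop(x, beta=10, t=4):
    """Chop positive x to t mantissa digits in the form 0.d1...dt * beta**p.

    Hypothetical helper: no exponent bounds, truncation only.
    """
    x = Fraction(x)
    if x <= 0:
        raise ValueError("x must be positive")
    m, p = x, 0
    # Normalize the mantissa m into [1/beta, 1).
    while m >= 1:
        m /= beta
        p += 1
    while m < Fraction(1, beta):
        m *= beta
        p -= 1
    kept = int(m * beta**t)   # integer formed by the first t digits (the "slots")
    return Fraction(kept, beta**t) * Fraction(beta) ** p

x = Fraction("3.14159")
print(fl_chop(x))                 # 3141/1000, i.e. 0.3141 * 10**1
print(fl_chop(Fraction(1, 3)))    # 3333/10000
print(fl_chop(x) == x)            # False: the digits 59 did not fit in the slots
```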

Important

Floating Point Numbers are not uniformly spaced. This is due to the way they are designed (see the equation at the beginning of the page).

Numbers that are closer to zero, which have more negative exponents, are spaced more closely together. Conversely, numbers with larger magnitudes, characterized by more positive exponents, are spaced further apart. See Fixed-Point Number System for fixed spacing.

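You can see how the spacing is $\beta^{p-t}$ between adjacent numbers with exponent $p$, so it grows by a factor of $\beta$ each time the exponent increases by one.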
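The non-uniform spacing can be checked directly on doubles. This sketch uses `math.ulp` (available from Python 3.9), which returns the gap between a float and the next larger one, and compares it with $\beta^{p-t}$ using $\beta = 2$, $t = 53$ and the exponent $p$ from `math.frexp`. The helper name `spacing` is my own, and the formula is only meant for normalized numbers.

```python
import math

def spacing(x, t=53):
    """beta**(p - t) for a normalized double x, with beta = 2."""
    _, p = math.frexp(x)      # x == m * 2**p with 0.5 <= m < 1
    return 2.0 ** (p - t)

for x in (1e-10, 1.0, 1e10, 2.0**53):
    # Each line: the number, its gap to the next float, and the formula's value.
    print(x, math.ulp(x), spacing(x))

print(math.ulp(1.0) == 2.0**-52)   # True
print(math.ulp(2.0**53) == 2.0)    # True: consecutive doubles here are 2 apart
```

Near $2^{53}$ the gap is already 2, so not every integer is representable beyond that point.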

The error introduced by converting a real number to a floating point number is called round-off error.
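A small sketch of round-off error in practice, which is also why floats are usually compared with a tolerance rather than `==` (the topic of the article linked at the top). It assumes IEEE doubles with round-to-nearest, and uses `Fraction` to read off the exact value that is actually stored for `0.1`.

```python
import math
from fractions import Fraction

a = 0.1 + 0.2
print(a == 0.3)              # False: each operand and the sum carry round-off error
print(math.isclose(a, 0.3))  # True with the default relative tolerance of 1e-9

# Exact round-off error made when storing the real number 1/10 as a double.
stored = Fraction(0.1)                 # exact value of the double nearest to 0.1
err = abs(stored - Fraction(1, 10))
rel_err = err / Fraction(1, 10)
print(err > 0)                         # True: 0.1 is not representable in base 2
print(rel_err <= Fraction(1, 2**53))   # True: within half a unit in the last place
```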

See Machine Epsilon.