A Reduction of Imitation Learning and Structured Prediction to No-Regret Online Learning

export TS="$(date +%Y%m%d_%H%M%S)" && \

export DATASET_REPO_ID="${HF_USER}/eval_koch-tshirt-dagger-${TS}" && \

export INTERVENTION_REPO_ID="${HF_USER}/koch-tshirt-dagger-corrections-${TS}" && \

lerobot-record \

--robot.type=bi_koch_follower \

--robot.left_arm_port="$FOLLOWER_LEFT_PORT" \

--robot.right_arm_port="$FOLLOWER_RIGHT_PORT" \

--robot.id=bimanual_follower \

--robot.cameras="{ top: {type: opencv, index_or_path: $TOP_CAMERA_INDEX_OR_PATH, width: 640, height: 480, fps: 30}, left_wrist: {type: opencv, index_or_path: $LEFT_WRIST_CAMERA_INDEX_OR_PATH, width: 640, height: 480, fps: 30}, right_wrist: {type: opencv, index_or_path: $RIGHT_WRIST_CAMERA_INDEX_OR_PATH, width: 640, height: 480, fps: 30} }" \

--teleop.type=bi_koch_leader \

--teleop.left_arm_port="$LEADER_LEFT_PORT" \

--teleop.right_arm_port="$LEADER_RIGHT_PORT" \

--teleop.id=bimanual_leader \

--teleop.intervention_enabled=true \

--dataset.repo_id="$DATASET_REPO_ID" \

--dataset.single_task="Fold the t-shirt and put it in the bin" \

--dataset.num_episodes=10 \

--dataset.episode_time_s=60 \

--dataset.reset_time_s=15 \

--intervention_repo_id="$INTERVENTION_REPO_ID" \

--policy.path="${HF_USER}/act_policy_koch-tshirt-folding-v2" \

--display_data=true \

--teleop.inverse_follow=falseDAgger = dataset aggregation

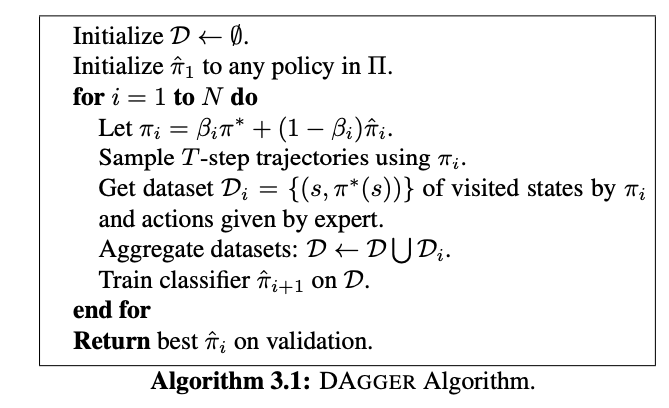

First heard about this paper when reading up on ALOHA.

Dagger "Helps reduce distribution shift for the learner", but how?

- They do a sort of Polyak Averaging

DAgger improves on behavioral cloning by training on a dataset that better resembles the observations the trained policy is likely to encounter, but it requires querying the expert online.

Krish M was mentioning this, and I was confusing it with IMPALA.