Diffusion Model

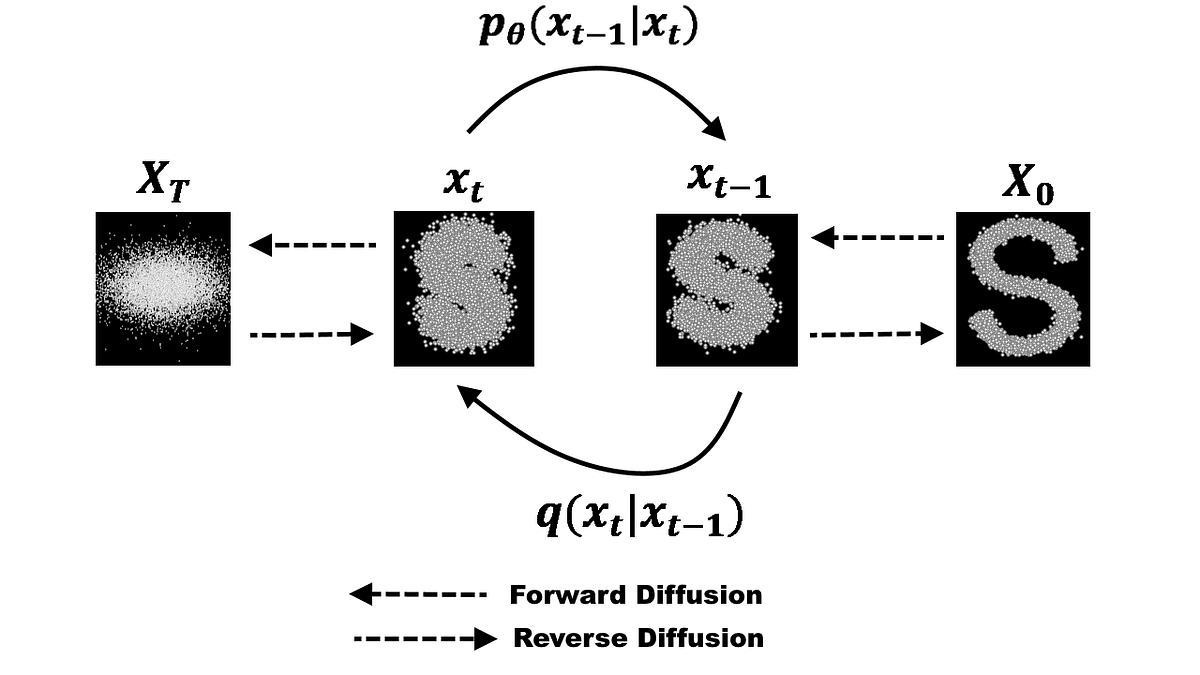

Add noise gradually and learn to reverse the process. This can be interpreted in several equivalent ways (see Other perspectives on diffusion below).

See DDPM for implementation detail notes.

Models

- DALL-E 2

- Stable Diffusion

- Imagen

DDPM paper (Ho et al. 2020, “Denoising Diffusion Probabilistic Models”): https://arxiv.org/pdf/2006.11239.pdf

I worked on one for HackWestern.

- Stable Diffusion Animation: https://replicate.com/andreasjansson/stable-diffusion-animation/examples#nby3ut2bljhixb2gejkljmcib4

Walkthrough (CS231n 2025 Lec 14)

Diffusion terminology is famously a mess (DDPM, score matching, SDEs, flow matching, rectified flow, ε-prediction, v-prediction, …). CS231n 2025 introduces the modern Rectified Flow formulation first because it’s the cleanest, then shows the rest as a generalized diffusion template.

Intuition

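- Pick a simple noise distribution $p_{\text{noise}}$ (usually $z \sim \mathcal{N}(0, I)$).

- Corrupt data $x$ at varying noise levels $t$ to get $x_t$ ($t = 0$: no noise, $t = 1$: full noise).

- Train a network $f_\theta(x_t, t)$ to remove a little noise.

- At inference, sample $x_1 \sim p_{\text{noise}}$ and apply $f_\theta$ many times in sequence to land at a clean $x_0$.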

Rectified Flow training

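The cleanest concrete instance: straight-line interpolation between data and noise (same idea as Flow Matching). Each iteration:

- Sample data $x \sim p_{\text{data}}$, noise $z \sim p_{\text{noise}}$, and a noise level $t \sim \text{Uniform}[0, 1]$.

- Interpolate: $x_t = (1 - t)\,x + t\,z$.

Train the network $f_\theta$ to predict the constant velocity $v = z - x$ along that line:

$$L = \lVert f_\theta(x_t, t) - v \rVert^2$$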

Whole training loop is a few lines:

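    import random
    import torch

    # Assumes `model`, `optimizer`, and an iterable `dataset` of tensors are already defined.
    for x in dataset:
        z = torch.randn_like(x)            # noise sample, same shape as the data
        t = random.uniform(0, 1)           # noise level
        xt = (1 - t) * x + t * z           # point on the straight line from x to z
        v = model(xt, t)                   # predicted velocity
        loss = (z - x - v).square().sum()  # squared error against the target z - x
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

Rectified Flow sampling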

Pick the number of steps $N$ (`num_steps` in the code; often 30 to 50). Start from noise $x_1 \sim p_{\text{noise}}$ and Euler-step backward from $t = 1$ to $t = 0$:

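    import torch

    # Assumes `model`, `x_shape`, and `num_steps` are already defined.
    sample = torch.randn(x_shape)  # start from pure noise at t = 1
    with torch.no_grad():
        # Evaluate at t = 1, 1 - 1/num_steps, ..., 1/num_steps so the grid matches the step size.
        for t in torch.linspace(1, 1 / num_steps, num_steps):
            v = model(sample, t)
            sample = sample - v / num_steps  # Euler step of size 1/num_steps toward t = 0

Conditional Rectified Flow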

Same loop, but the model also takes a condition $y$ (class label, text embedding, etc.):

    for (x, y) in dataset:
        ...
        v = model(xt, y, t)
        loss = (z - x - v).square().sum()

Classifier-Free Guidance (CFG)

Question: how much should we emphasize the conditioning $y$? The CFG trick (Ho & Salimans 2022): during training, randomly drop $y$ (replace it with a null condition $y_\varnothing$) ~50% of the time. Now the same network is both conditional and unconditional.

At sampling, evaluate two velocities at each step and combine:

- $v^{\varnothing} = f_\theta(x_t, y_\varnothing, t)$ points toward $p(x)$

- $v^{y} = f_\theta(x_t, y, t)$ points toward $p(x \mid y)$

- $v^{\text{cfg}} = (1 + w)\,v^{y} - w\,v^{\varnothing}$ extrapolates more toward $p(x \mid y)$

Step according to $v^{\text{cfg}}$. “Classifier-free” because earlier methods used a separately-trained discriminative classifier to compute the step direction (Dhariwal & Nichol 2021). CFG is used everywhere in practice and is critical for high-quality outputs, but it doubles sampling cost (two model evals per step).
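A minimal sketch of CFG sampling in plain Python, assuming the usual combination $(1 + w)\,v^{y} - w\,v^{\varnothing}$ with guidance weight `w`. The names `cfg_velocity`, `sample_with_cfg`, and `toy_model` are hypothetical, and `toy_model` is a stand-in whose "data" is a single point (the condition value, or 0 for the null condition), so the result can be checked by hand.

```python
def cfg_velocity(v_cond, v_uncond, w):
    """Combine conditional and unconditional velocities element-wise."""
    return [(1 + w) * vc - w * vu for vc, vu in zip(v_cond, v_uncond)]


def sample_with_cfg(model, sample, y, null_y, w, num_steps):
    """Euler-step from t = 1 toward t = 0, using two model evals per step."""
    for k in range(num_steps):
        t = 1 - k / num_steps                # t = 1, 1 - 1/N, ..., 1/N
        v_cond = model(sample, y, t)         # velocity with the condition
        v_uncond = model(sample, null_y, t)  # velocity with the null condition
        v = cfg_velocity(v_cond, v_uncond, w)
        sample = [s - vi / num_steps for s, vi in zip(sample, v)]
    return sample


def toy_model(sample, y, t):
    """Hypothetical stand-in: exact velocity when all data sits at one point."""
    target = 0.0 if y is None else float(y)
    return [(s - target) / t for s in sample]


plain = sample_with_cfg(toy_model, [1.0], 2.0, None, w=0.0, num_steps=4)
guided = sample_with_cfg(toy_model, [1.0], 2.0, None, w=1.5, num_steps=4)
print(plain, guided)
```

With `w = 0` the toy sample lands on the conditional target (2.0); with `w = 1.5` it lands past it, at 5.0, which is the extrapolation away from the unconditional target that the third bullet describes.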

Optimal prediction & noise schedules

What is the network actually trying to predict?

- Many $(x, z)$ pairs can produce the same $x_t$, so the network must average over all of them.

- $t = 1$ (full noise): $x_t = z$, so the optimal $v$ only needs the mean of $p_{\text{data}}$. Easy.

- $t = 0$ (no noise): $x_t = x$, so the optimal $v$ only needs the mean of $p_{\text{noise}}$. Easy.

- Middle noise is hardest and most ambiguous, but uniform sampling of $t$ gives equal weight to all noise levels.
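A short worked version of these bullets, assuming the rectified-flow setup $x_t = (1 - t)\,x + t\,z$ with target $z - x$, a squared-error loss, and $x$ independent of $z$. Under those assumptions the minimizer is a conditional mean:

$$
\begin{aligned}
v^*(x_t, t) &= \mathbb{E}[\,z - x \mid x_t\,] \\
t = 1:\quad x_t = z \;&\Rightarrow\; v^* = z - \mathbb{E}[x] \\
t = 0:\quad x_t = x \;&\Rightarrow\; v^* = \mathbb{E}[z] - x = -x \quad \text{(zero-mean noise)}
\end{aligned}
$$

At the two endpoints one of $x$, $z$ is known exactly and the other is replaced by its mean; in between, the posterior over $(x, z)$ given $x_t$ is genuinely spread out, which is why those noise levels are the hard ones.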

Solution: use a non-uniform noise schedule that emphasizes middle $t$. Common choice: logit-normal sampling (Esser et al. 2024, “Scaling Rectified Flow Transformers”):

    t = torch.randn(()).sigmoid()  # sigmoid of a standard normal sample

For high-resolution data, also shift $t$ toward higher noise to account for pixel correlations.
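A stdlib-only sketch of both ideas. `sample_logit_normal` mirrors the one-liner above; `shift_toward_noise` is an assumption on my part, one common form of timestep shift for rectified-flow models, since the lecture notes only say to shift toward higher noise. The function names and the `shift` parameter are hypothetical.

```python
import math
import random


def sample_logit_normal(rng, mean=0.0, std=1.0):
    """Draw t in (0, 1) as the sigmoid of a Gaussian sample."""
    u = rng.gauss(mean, std)
    return 1.0 / (1.0 + math.exp(-u))


def shift_toward_noise(t, shift):
    """Monotone remap of [0, 1] onto itself; shift > 1 moves t toward 1 (more noise)."""
    return shift * t / (1.0 + (shift - 1.0) * t)


rng = random.Random(0)
ts = [sample_logit_normal(rng) for _ in range(10_000)]

# Fraction of samples in the middle half of [0, 1]; uniform sampling would give about 0.5.
middle_fraction = sum(0.25 <= t <= 0.75 for t in ts) / len(ts)
print(middle_fraction)

shifted = [shift_toward_noise(t, shift=3.0) for t in ts]
print(sum(ts) / len(ts), sum(shifted) / len(shifted))
```

The shift keeps the endpoints fixed (0 stays 0, 1 stays 1) and only redistributes mass in between, so it composes cleanly with whatever base distribution is used for $t$.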

Latent diffusion (LDMs) — see Latent Diffusion Model

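Naive diffusion doesn’t scale to high-resolution pixels. Modern pipelines diffuse in a compressed latent space (typically from a VAE + GAN-trained autoencoder with spatial downsampling factor $D$ and $C$ latent channels, so an $H \times W \times 3$ image becomes $\frac{H}{D} \times \frac{W}{D} \times C$ latents). LDMs are the standard form today.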
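To make the size reduction concrete, here is a small shape calculator. The 8× downsampling matches the FLUX.1 bullet further down; the 16 latent channels and the 2×2 patch size are illustrative assumptions, and both function names are hypothetical.

```python
def latent_shape(height, width, downsample=8, channels=16):
    """Latent (H, W, C) for an image of the given size."""
    if height % downsample or width % downsample:
        raise ValueError("image size must be divisible by the downsampling factor")
    return (height // downsample, width // downsample, channels)


def num_image_tokens(latent, patch=2):
    """Number of tokens after splitting the latent grid into patch x patch tiles."""
    h, w, _ = latent
    return (h // patch) * (w // patch)


latent = latent_shape(512, 512)
tokens = num_image_tokens(latent)
compression = (512 * 512 * 3) / (latent[0] * latent[1] * latent[2])
print(latent, tokens, compression)  # (64, 64, 16) 1024 12.0
```

Under these assumed settings the diffusion model sees 12× fewer numbers than the raw pixels, and the Transformer sequence is about a thousand tokens rather than hundreds of thousands of pixels.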

Diffusion Transformer (DiT) — Peebles & Xie ICCV 2023

DiT uses standard Transformer blocks; the design question is how to inject conditioning (timestep $t$, text, class label):

- Predict scale/shift (adaLN-Zero): most common for the diffusion timestep $t$.

- Cross-attention / joint attention — common for text and image conditioning.

- Concatenate on sequence dimension — alternative.
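A sketch of the first option in equation form, following the adaLN-Zero block as I understand it from the DiT paper; the symbols $c$, $\beta$, $\gamma$, $\alpha$, $F$ are my own labels. A small MLP maps the conditioning embedding $c$ to a per-channel shift, scale, and gate, which modulate each residual sub-block $F$ (attention or MLP):

$$
\begin{aligned}
(\beta, \gamma, \alpha) &= \mathrm{MLP}(c) \\
h' &= h + \alpha \odot F\big((1 + \gamma) \odot \mathrm{LN}(h) + \beta\big)
\end{aligned}
$$

The “Zero” refers to initializing the MLP's output layer to zero, so $\alpha = 0$ at the start of training and every block begins as the identity.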

Text-to-Image example: FLUX.1 [dev]

- Text encoder: T5 + CLIP

- VAE encoder/decoder: 8× downsampling

- Diffusion: 12B-parameter DiT, patchify → image tokens

- image ↔ latents

Text-to-Video — same recipe, video latents

Add a temporal axis: latents are $T \times H \times W \times C$ (frames × height × width × channels). Meta MovieGen (2024): 30B-param DiT, patchify → 76K tokens, outputs video. The era of large video diffusion models exploded in 2024 (Sora, Gen3, Dream Machine, MovieGen, CogVideoX, Mochi, Veo 2, Wan, Cosmos, Kling 2.0 …).

Diffusion distillation

Sampling needs ~30–50 model evals per image — slow. Distillation algorithms (Progressive Distillation 2022, Consistency Models 2023, Adversarial Diffusion Distillation 2024, Multistep Distillation via Moment Matching 2025) collapse this down to a few steps or even 1 step, and can bake CFG into the student.

Generalized Diffusion

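All diffusion variants share a template; only the coefficient functions differ. The noisy input is $x_t = a(t)\,x + b(t)\,z$, the regression target is $y = c(t)\,x + d(t)\,z$, and the loss is $\lVert f_\theta(x_t, t) - y \rVert^2$:

| Variant | $a(t)$ | $b(t)$ | $c(t)$ | $d(t)$ |
|---|---|---|---|---|
| Rectified Flow | $1 - t$ | $t$ | $-1$ | $1$ |
| Variance Preserving (VP) (Song et al. ICLR 2021) | $\sqrt{1 - \sigma(t)^2}$ | $\sigma(t)$ | varies | varies |
| Variance Exploding (VE) (Karras et al. NeurIPS 2022) | $1$ | $\sigma(t)$ | varies | varies |

Prediction targets:

- x-prediction: $y = x$ ($c = 1$, $d = 0$)

- ε-prediction (DDPM): $y = z$ ($c = 0$, $d = 1$)

- v-prediction (Salimans & Ho 2022): $y = a(t)\,z - b(t)\,x$ ($c = -b(t)$, $d = a(t)$)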

Coefficients are usually picked through some mathematical formalism (SDEs, ODEs, score matching).
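A minimal sketch of the template on scalars, assuming the form $x_t = a(t)\,x + b(t)\,z$ with regression target $y = c(t)\,x + d(t)\,z$. The `Schedule` class and `training_pair` helper are hypothetical names; the rectified-flow coefficients follow the training loop earlier in these notes, while the cosine VP schedule is just one illustrative choice satisfying $a^2 + b^2 = 1$.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Schedule:
    a: Callable[[float], float]  # data coefficient in x_t
    b: Callable[[float], float]  # noise coefficient in x_t
    c: Callable[[float], float]  # data coefficient in the target
    d: Callable[[float], float]  # noise coefficient in the target


def training_pair(x, z, t, s):
    """Return (noisy input, regression target) for one (x, z, t) triple."""
    x_t = s.a(t) * x + s.b(t) * z
    y = s.c(t) * x + s.d(t) * z
    return x_t, y


# Rectified flow: straight line from x to z, target is the velocity z - x.
rectified_flow = Schedule(
    a=lambda t: 1 - t, b=lambda t: t, c=lambda t: -1.0, d=lambda t: 1.0
)

# Illustrative VP schedule (cosine) with epsilon-prediction: target is the noise z.
vp_cosine_eps = Schedule(
    a=lambda t: math.cos(0.5 * math.pi * t),
    b=lambda t: math.sin(0.5 * math.pi * t),
    c=lambda t: 0.0,
    d=lambda t: 1.0,
)

print(training_pair(2.0, -1.0, 0.25, rectified_flow))  # (1.25, -3.0)
print(training_pair(2.0, -1.0, 0.25, vp_cosine_eps))
```

Swapping variants then only means swapping the four functions; the training loop around `training_pair` stays the same.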

Other perspectives on diffusion

Sander Dieleman’s blog post (https://sander.ai/2023/07/20/perspectives.html) lists 8 equivalent views:

- Diffusion = autoencoders

- Diffusion = deep latent variable models (variational lower bound, same as VAE — see Deep Latent Variable Model)

- Diffusion learns the score function, a vector field pointing toward high-density regions; train a net to approximate the score $\nabla_{x_t} \log p(x_t)$ of the noisy data distribution. See Score Matching.

- Diffusion solves reverse SDEs

- Diffusion = flow-based models — see Flow Matching, Flow-Based Model

- Diffusion = recurrent neural networks (iterated function)

- Diffusion = autoregressive models (over noise levels)

- Diffusion estimates expectations
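One way to connect the score view to the noise-prediction view, sketched under the assumption that $x_t = a(t)\,x + b(t)\,z$ with $z \sim \mathcal{N}(0, I)$ independent of $x$ (here $a$ and $b$ are just labels for the data and noise coefficients):

$$
\nabla_{x_t} \log p_t(x_t)
= \mathbb{E}\left[ -\frac{x_t - a(t)\,x}{b(t)^2} \,\middle|\, x_t \right]
= -\frac{\mathbb{E}[\,z \mid x_t\,]}{b(t)}
$$

So a network trained with ε-prediction, which at its optimum outputs $\mathbb{E}[z \mid x_t]$, yields a score estimate once its output is divided by $-b(t)$.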

Autoregressive models strike back

Pixel-AR was abandoned because raw pixels make too long a sequence (about 3M values for 1024² RGB). But autoregressive models work great on discrete latents: train a VQ-VAE-style encoder/decoder with discrete latents (van den Oord 2017, Razavi VQ-VAE-2, Esser VQGAN, Yu 2022 Parti), then run an AR Transformer over the latent token sequence.
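The sequence-length argument as arithmetic. The 16× downsampling factor for the discrete tokenizer is an illustrative assumption, not a figure from the lecture, and the function names are hypothetical.

```python
def pixel_sequence_length(height, width, channels=3):
    """One token per subpixel value, as in pixel-level autoregressive models."""
    return height * width * channels


def latent_token_count(height, width, downsample):
    """One discrete token per cell of the downsampled latent grid."""
    return (height // downsample) * (width // downsample)


pixels = pixel_sequence_length(1024, 1024)
tokens = latent_token_count(1024, 1024, downsample=16)
print(pixels, tokens)  # 3145728 4096
```

Under that assumption the Transformer models a few thousand tokens instead of roughly three million, which is what makes the autoregressive approach practical again.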

Source

CS231n 2025 Lec 14 slides ~36–123 (intuition slide, Rectified Flow training+sampling+conditional, CFG, optimal prediction + noise schedules + logit-normal, LDMs end-to-end including VAE+GAN+diffusion combo, DiT conditioning variants, text-to-image FLUX, text-to-video MovieGen + 2024 video era timeline, distillation, generalized diffusion template + VP/VE/x/ε/v variants, latent-variable / score / SDE perspectives, AR-strikes-back via discrete latents).