Generative Model

You have GANs and diffusion models that can generate data. There’s also GPT-3.

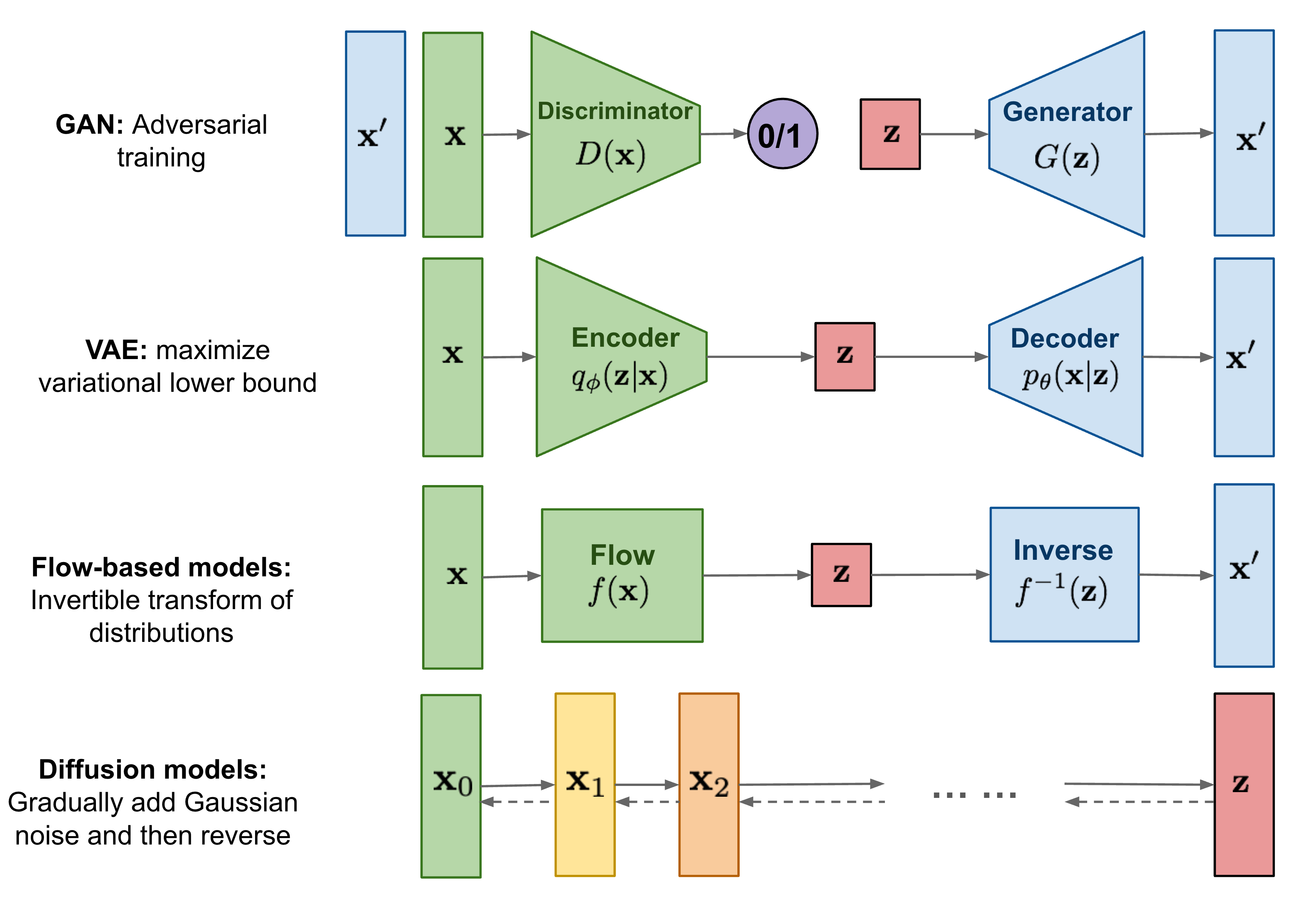

Types of generative models (source):

- Likelihood-based models: approximate the probability distribution $p(x)$. Ex:

- Implicit / Score-Based Models: do not model $p(x)$ explicitly, or use alternative objectives to generate samples. Ex:

- Generative Adversarial Network (GAN):

- Diffusion Models:

- Energy-Based Model (EBM):

I still don't fully get the difference.

It’s not about starting from noise and then denoising.

What is $p(x)$?

- $p(x)$ is the probability density (or mass) of a data point $x$ under your model.

- It tells you how likely your model thinks that $x$ is.
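
To make "how likely your model thinks a point is" concrete, here is a minimal sketch that assumes the simplest possible model: a 1D Gaussian fitted by maximum likelihood. The data values and the helper names `fit_gaussian` and `log_density` are made up for illustration and are not from any of the sources above.

```python
import math

def fit_gaussian(data):
    """Maximum-likelihood mean and standard deviation of a 1D Gaussian."""
    mean = sum(data) / len(data)
    var = sum((x - mean) ** 2 for x in data) / len(data)
    return mean, math.sqrt(var)

def log_density(x, mean, std):
    """log p(x) under the Gaussian N(mean, std^2)."""
    return -0.5 * math.log(2 * math.pi * std ** 2) - (x - mean) ** 2 / (2 * std ** 2)

# Made-up training points (assumption, for illustration only).
data = [4.8, 5.1, 5.0, 4.9, 5.3, 4.7, 5.2]
mean, std = fit_gaussian(data)

typical = log_density(5.0, mean, std)   # a point that looks like the data
outlier = log_density(9.0, mean, std)   # a point far from the data
print(typical, outlier)
```

The point near the training data gets a much higher log-density than the far-away point. Note that for continuous data $p(x)$ is a density, so its value can exceed 1; only its integral is constrained to be 1.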

Does this matter anymore?

If you can just slap a transformer on it and feed it data, does this really matter? The architecture is becoming standardized (transformers), but the generative modeling paradigm (diffusion vs. autoregressive vs. GAN) still shapes what the model does and how it learns.

- https://lilianweng.github.io/posts/2021-07-11-diffusion-models/

- https://yang-song.net/blog/2021/score/

- From Lilian Weng’s blog, it seems that all of these are really similar.

Taxonomy (CS231n 2025 Lec 13)

CS231n uses the Goodfellow 2017 tree, which splits models by how they relate to the density $p(x)$. The normalization constraint $\int p(x)\,dx = 1$ implies that different values of $x$ compete for probability mass:

- Generative models
  - Explicit density (model computes $p(x)$)
    - Tractable → Autoregressive
    - Approximate → VAE
  - Implicit density (can only sample from $p(x)$)
    - Direct → GAN
    - Indirect → Diffusion

- Tractable: can actually evaluate $p(x)$ (e.g. autoregressive via the chain rule).

- Approximate: can’t evaluate $p(x)$ exactly but can bound/approximate it (VAE: maximize the ELBO instead of $\log p(x)$).

- Direct implicit: single-shot sample from a noise vector (GAN generator).

- Indirect implicit: iterative sampling procedure (diffusion: denoise $T$ times).
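
The "tractable" branch can be sketched with a toy autoregressive model. The chain rule factorizes $p(x) = \prod_i p(x_i \mid x_{<i})$; the sketch below assumes a first-order (bigram) simplification where each token depends only on the previous one, with hand-picked probability tables that are purely hypothetical. It evaluates $p(x)$ exactly and checks that the probabilities of all length-3 sequences sum to 1, which is the normalization constraint in action.

```python
import itertools
import math

VOCAB = ["a", "b"]

# Hypothetical hand-picked conditionals (assumption); each row sums to 1.
P_FIRST = {"a": 0.6, "b": 0.4}
P_NEXT = {"a": {"a": 0.7, "b": 0.3}, "b": {"a": 0.2, "b": 0.8}}

def log_prob(seq):
    """Exact log p(x) via the chain rule for a first-order model."""
    lp = math.log(P_FIRST[seq[0]])
    for prev, cur in zip(seq, seq[1:]):
        lp += math.log(P_NEXT[prev][cur])
    return lp

# Probability of one sequence: p(a) * p(a | a) * p(b | a).
p_aab = math.exp(log_prob(("a", "a", "b")))

# Sum over every possible length-3 sequence: should come out to 1.
total = sum(
    math.exp(log_prob(seq)) for seq in itertools.product(VOCAB, repeat=3)
)
print(p_aab, total)
```

Because every conditional is normalized, raising the probability of one sequence necessarily lowers the probability of others. A real autoregressive model would replace the lookup tables with a neural network conditioned on the full prefix.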

Discriminative vs generative vs conditional-generative:

| Models | Used for | Learns |
|---|---|---|
| Discriminative | classification | $p(y \mid x)$ |
| Generative | density / sampling / anomaly detection | $p(x)$ |
| Conditional generative | class-conditional / text-to-image generation | $p(x \mid y)$ |

Bayes' rule ties them: $p(y \mid x) = \frac{p(x \mid y)\,p(y)}{p(x)}$, so a conditional generative model plus a class prior gives a discriminative one.
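
As a minimal sketch of that Bayes relationship, the code below turns class-conditional densities $p(x \mid y)$ and a class prior $p(y)$ into a classifier $p(y \mid x)$. The two Gaussian class models, the class names, and the prior values are all hypothetical, chosen only to make the arithmetic visible.

```python
import math

def gaussian_pdf(x, mean, std):
    """Density of N(mean, std^2) at x."""
    return math.exp(-(x - mean) ** 2 / (2 * std ** 2)) / (std * math.sqrt(2 * math.pi))

# Hypothetical class-conditional models p(x | y) as (mean, std), plus a prior p(y).
CLASS_MODELS = {"cat": (4.0, 1.0), "dog": (7.0, 1.5)}
PRIOR = {"cat": 0.5, "dog": 0.5}

def posterior(x):
    """p(y | x) = p(x | y) p(y) / p(x), where p(x) = sum_y p(x | y) p(y)."""
    joint = {y: gaussian_pdf(x, *CLASS_MODELS[y]) * PRIOR[y] for y in CLASS_MODELS}
    evidence = sum(joint.values())  # this is p(x)
    return {y: j / evidence for y, j in joint.items()}

post = posterior(4.5)
print(post)
```

The denominator `evidence` is the unconditional density $p(x)$, so the same ingredients give both a density estimate and a classifier.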

Source

CS231n 2025 Lec 13 slides ~35–47, 113–115 (discriminative/generative/conditional split, density normalization, taxonomy tree, Goodfellow attribution).

Generative Video Models

Do generative video models understand physics?

- https://arxiv.org/pdf/2501.09038 this paper argues NO (benchmark evidence, not a formal proof).

The models seem to learn to correlate frames without acquiring an understanding of the world’s physics.