Transposed Convolution

In PyTorch, use torch.nn.ConvTranspose2d.

I first came across this in U-Net.

Resources

- https://d2l.ai/chapter_computer-vision/transposed-conv.html

- https://pytorch.org/docs/stable/generated/torch.nn.ConvTranspose2d.html

Learnable upsampling (CS231n 2025 Lec 9)

A strided conv with stride $s$ downsamples by a factor of $s$. Transposed conv goes the other way: it upsamples by a factor of $s$ using a learned kernel. It undoes the change in spatial size, not the values themselves.

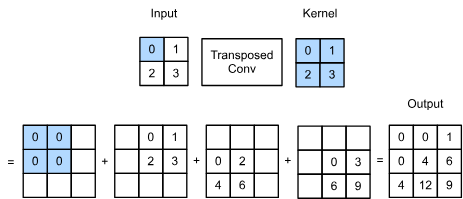
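
The upsampling factor can be checked with the output-size formula from the PyTorch ConvTranspose2d docs, applied here to a single spatial dimension. This is only a sketch: the function name transposed_conv_out_len is made up for illustration, and dilation defaults to 1.

```python
def transposed_conv_out_len(n_in, kernel_size, stride=1, padding=0,
                            output_padding=0, dilation=1):
    """Output length along one spatial dimension of a transposed conv.

    Follows the shape formula in the torch.nn.ConvTranspose2d docs.
    The function name is hypothetical, for illustration only.
    """
    return ((n_in - 1) * stride - 2 * padding
            + dilation * (kernel_size - 1) + output_padding + 1)


# kernel 2, stride 2: exactly doubles the length.
print(transposed_conv_out_len(16, kernel_size=2, stride=2))  # 32

# kernel 3, stride 2, padding 1: one cell short of doubling ...
print(transposed_conv_out_len(16, kernel_size=3, stride=2, padding=1))  # 31

# ... and output_padding=1 adds the missing cell.
print(transposed_conv_out_len(16, kernel_size=3, stride=2, padding=1,
                              output_padding=1))  # 32
```

So "upsamples by the stride" is exact only for some kernel/padding combinations; others land one or more cells off and need output_padding or a crop.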

How it works

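Each input element scales the filter and writes a copy into the output; where the copies overlap (the kernel is wider than the stride), the values are summed. With kernel size 3, stride 2, padding 1, a 1D input $[a, b]$ and filter $[x, y, z]$ produce:

$$[ay,\ az + bx,\ by]$$

Before padding is applied, the full output is $[ax,\ ay,\ az + bx,\ by,\ bz]$; padding 1 trims one cell from each end.

The middle output cell, $az + bx$, receives contributions from both inputs: that’s the “sum where overlaps” rule.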
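
The scatter-and-sum rule can be sketched in plain Python. This assumes a single channel and no bias, and treats padding as trimming that many cells from each end of the full output; conv_transpose_1d is a hypothetical helper, not a library function.

```python
def conv_transpose_1d(inputs, kernel, stride=1, padding=0):
    """1D transposed convolution by scatter-add (single channel, no bias).

    Hypothetical helper for illustration, not a library function.
    """
    k = len(kernel)
    full_len = (len(inputs) - 1) * stride + k
    out = [0] * full_len
    for i, value in enumerate(inputs):
        start = i * stride  # each input lands `stride` cells further right
        for j, weight in enumerate(kernel):
            # write a scaled copy of the kernel; overlapping cells accumulate
            out[start + j] += value * weight
    # padding trims that many cells from each end of the full output
    return out[padding:full_len - padding]


# Two inputs, kernel of size 3, stride 2.
print(conv_transpose_1d([1, 2], [10, 20, 30], stride=2))
# [10, 20, 50, 40, 60]
print(conv_transpose_1d([1, 2], [10, 20, 30], stride=2, padding=1))
# [20, 50, 40]
```

The 50 is the overlap cell: 1 * 30 from the first input plus 2 * 10 from the second.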

Equivalent matrix form

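A standard 1D conv with stride 2 is multiplication by a sparse matrix $X$ (each row holds the kernel weights, shifted right by the stride relative to the row above). Transposed conv is multiplication by $X^\top$. For a filter $[x, y, z]$, a length-5 conv input with no padding, and a transposed-conv input $[a, b]$:

$$
X = \begin{bmatrix} x & y & z & 0 & 0 \\ 0 & 0 & x & y & z \end{bmatrix},
\qquad
X^\top \begin{bmatrix} a \\ b \end{bmatrix}
= \begin{bmatrix} x & 0 \\ y & 0 \\ z & x \\ 0 & y \\ 0 & z \end{bmatrix}
\begin{bmatrix} a \\ b \end{bmatrix}
= \begin{bmatrix} ax \\ ay \\ az + bx \\ by \\ bz \end{bmatrix}
$$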

So “transposed convolution” really is the transpose of the conv matrix — that’s where the name comes from. Other names you’ll see: deconvolution (misleading — it’s not the mathematical inverse of convolution), upconvolution, fractionally-strided convolution, backward-strided convolution.
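
The matrix view can be checked numerically. The sketch below builds the matrix for a stride-2, size-3 conv with no padding, then multiplies by the matrix and by its transpose; all three helper names are made up for illustration.

```python
def conv_matrix_1d(kernel, n_in, stride):
    """Matrix X such that X times the input is a strided 1D conv (no padding)."""
    k = len(kernel)
    n_out = (n_in - k) // stride + 1
    rows = []
    for r in range(n_out):
        row = [0] * n_in
        for j, w in enumerate(kernel):
            row[r * stride + j] = w  # kernel shifted right by `stride` per row
        rows.append(row)
    return rows


def matvec(matrix, vec):
    """Plain matrix-vector product."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def transpose(matrix):
    """Swap rows and columns."""
    return [list(col) for col in zip(*matrix)]


X = conv_matrix_1d([10, 20, 30], n_in=5, stride=2)
print(X)  # [[10, 20, 30, 0, 0], [0, 0, 10, 20, 30]]

down = matvec(X, [1, 1, 1, 1, 1])   # forward conv: length 5 -> 2
up = matvec(transpose(X), [1, 2])   # transposed conv: length 2 -> 5
print(down)  # [60, 60]
print(up)    # [10, 20, 50, 40, 60]
```

Multiplying by the transpose restores the length (2 back to 5) but not the original values, which is why "deconvolution" is a misleading name.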

Where it’s used

Decoder upsampling stages in semantic segmentation networks (FCN, U-Net), generators in GANs, and the upsampling path in VAEs.

Source

CS231n 2025 Lec 9 slides 50–69, 151–152 (learnable upsampling, transposed conv 1D example, conv as matrix multiplication). 2026 PDF not published — using 2025 fallback.