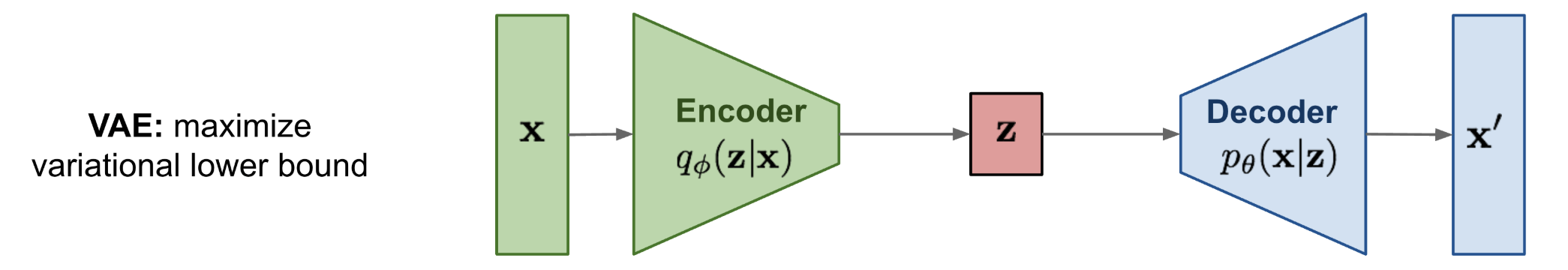

Variational Autoencoder (VAE)

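A VAE is a latent variable model trained with variational inference: it maximizes the ELBO, a lower bound on $\log p_\theta(x)$, using an encoder $q_\phi(z \mid x)$ to approximate the intractable posterior $p_\theta(z \mid x)$.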

Intuition

An autoencoder that forces the latent space to be a well-behaved distribution (usually a unit Gaussian). The KL term pulls the encoder output toward that prior so sampling works; the reconstruction term pushes it to stay informative enough to rebuild the input. The reparameterization trick lets gradients flow through a random sample by pulling the randomness out of the computation graph as a fixed $\epsilon \sim \mathcal{N}(0, I)$.

What's the reparameterization trick buying us?

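Sampling from a learned distribution is non-differentiable, which breaks backprop. The trick rewrites $z \sim \mathcal{N}(\mu, \sigma^2)$ as $z = \mu + \sigma \odot \epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$, so gradients flow through $\mu$ and $\sigma$ while randomness lives only in $\epsilon$.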
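A minimal NumPy sketch of the trick, assuming a diagonal Gaussian parameterized by a mean and a log-variance. The function name `reparameterize` and the log-variance parameterization are illustrative choices, not something these notes prescribe. With `eps` held fixed, `z` is a deterministic, differentiable function of the mean and standard deviation, which the finite-difference check makes visible:

```python
import numpy as np

def reparameterize(mu, log_var, eps):
    """Return z = mu + sigma * eps, where sigma = exp(0.5 * log_var)."""
    sigma = np.exp(0.5 * log_var)
    return mu + sigma * eps

rng = np.random.default_rng(0)
mu = np.array([0.5, -1.0])
log_var = np.array([0.0, -2.0])
eps = rng.standard_normal(mu.shape)  # all randomness lives here

z = reparameterize(mu, log_var, eps)

# With eps fixed, dz/dmu = 1 and dz/dsigma = eps; check by finite differences.
h = 1e-6
sigma = np.exp(0.5 * log_var)
dz_dmu = (reparameterize(mu + h, log_var, eps) - z) / h
dz_dsigma = ((mu + (sigma + h) * eps) - z) / h
```

The derivative with respect to the standard deviation is just the sampled noise, which is why the noise has to be treated as a constant input rather than something resampled inside the gradient computation.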

I got the intuition for VAEs here https://chatgpt.com/share/693a58cf-e2e4-8002-9fea-eb7fad7817b1. A VAE tries to map the original distribution to a Gaussian and back, with both mappings encoded in the loss function.

This is a variant of the Autoencoder whose bottleneck represents each input as a distribution over latent features rather than a single point, which is what lets it generate new samples.

Resources

It's basically an Autoencoder but we add Gaussian noise to the latent variable $z$?

Key difference:

- Regular Autoencoder: Input → Encoder → Fixed latent representation → Decoder → Reconstruction

- VAE: Input → Encoder → Latent distribution → Sample from distribution (adds Gaussian noise via reparameterization trick) → Decoder → Reconstruction

Variational autoencoders provide a principled framework for learning deep latent-variable models and corresponding inference models.

Process

Forward Pass (Encoding → Sampling → Decoding)

- Encoder:

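Input data $x$, outputs parameters (mean $\mu_\phi(x)$ and variance $\sigma_\phi^2(x)$) of latent distribution $q_\phi(z \mid x)$:

$$q_\phi(z \mid x) = \mathcal{N}\big(z;\ \mu_\phi(x),\ \operatorname{diag}(\sigma_\phi^2(x))\big)$$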

- Reparametrization Trick: Differentiably sample latent variable $z$:

$$z = \mu_\phi(x) + \sigma_\phi(x) \odot \epsilon, \quad \epsilon \sim \mathcal{N}(0, I)$$

- Decoder:

Reconstruct data $\hat{x}$ from sampled latent vector $z$:

$$\hat{x} \sim p_\theta(x \mid z)$$
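The three forward-pass steps can be sketched in NumPy as follows. This is a toy under stated assumptions: the encoder and decoder are single linear layers with random, untrained weights, and the names `W_mu`, `W_logvar`, `W_dec`, `encode`, `sample_z` and `decode` are all hypothetical. A real VAE would use deeper networks and an autodiff framework.

```python
import numpy as np

rng = np.random.default_rng(0)
x_dim, z_dim = 6, 2

# Hypothetical single-layer encoder/decoder weights (random, untrained).
W_mu = rng.normal(scale=0.1, size=(x_dim, z_dim))
W_logvar = rng.normal(scale=0.1, size=(x_dim, z_dim))
W_dec = rng.normal(scale=0.1, size=(z_dim, x_dim))

def encode(x):
    """Return the mean and log-variance of q(z | x)."""
    return x @ W_mu, x @ W_logvar

def sample_z(mu, log_var):
    """Reparameterized sample z = mu + sigma * eps, with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def decode(z):
    """Return the decoder mean, used here as the reconstruction x_hat."""
    return z @ W_dec

x = rng.normal(size=(4, x_dim))   # batch of 4 toy inputs
mu, log_var = encode(x)           # encoder
z = sample_z(mu, log_var)         # sampling
x_hat = decode(z)                 # decoder
```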

Loss Function (Negative ELBO):

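Optimize encoder and decoder parameters $\phi, \theta$ by minimizing:

$$\mathcal{L}(\theta, \phi; x) = -\mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big] + D_{KL}\big(q_\phi(z \mid x) \,\|\, p(z)\big)$$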

Read the two terms as a tug-of-war. Reconstruction says “encode $x$ into a sharp $q_\phi(z \mid x)$ so the decoder can recover it”; KL says “but make $q_\phi(z \mid x)$ look like the prior $p(z)$, so I can sample from $p(z)$ at test time and still land on sensible values.” The balance is what makes the latent space both informative and samplable.
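A hedged sketch of how the two terms might be computed for a batch, assuming a unit-variance Gaussian decoder (so the reconstruction term reduces to half the squared error, up to a constant), a diagonal Gaussian encoder, and a standard normal prior. The function name `negative_elbo` and its return signature are illustrative.

```python
import numpy as np

def negative_elbo(x, x_hat, mu, log_var):
    """Batch-mean negative ELBO for q = N(mu, diag(exp(log_var))) and p(z) = N(0, I)."""
    # Reconstruction: -log p(x | z) for a unit-variance Gaussian decoder, constant dropped.
    recon = 0.5 * np.sum((x - x_hat) ** 2, axis=1)
    # KL(q || p) in closed form for a diagonal Gaussian against a standard normal.
    kl = 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=1)
    return np.mean(recon + kl), np.mean(recon), np.mean(kl)

x = np.ones((3, 4))
mu = np.zeros((3, 2))
log_var = np.zeros((3, 2))

# Perfect reconstruction with q equal to the prior: both terms vanish.
total, recon, kl = negative_elbo(x, x_hat=x, mu=mu, log_var=log_var)

# Moving the encoder mean away from 0 is charged by the KL term only.
total_shifted, _, kl_shifted = negative_elbo(x, x_hat=x, mu=mu + 1.0, log_var=log_var)
```

Returning the two terms separately is a convenience for watching the tug-of-war during training; only their sum is optimized.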

Notes from the guide

The VAE can be viewed as two coupled, but independently parameterized models:

- encoder (recognition model)

- decoder (generative model)

Motivation

We want to maximize the log likelihood $\log p_\theta(x)$. To make this a generative process, we want it conditioned on some known probability distribution $p(z)$, so that the model maps the distribution $p(z)$ to $p_\theta(x)$; otherwise it’s just like an Autoencoder, always deterministic.

We expand out $p_\theta(x)$:

$$p_\theta(x) = \int p_\theta(x \mid z)\, p(z)\, dz$$

However, this is NOT tractable. Trying every single value for $z$ is infeasible since $p_\theta(x \mid z)$ is implemented as a neural net.

I'm confused about what is tractable and what is not tractable?

- $p(z)$ is tractable: a simple prior to generate $z$ (e.g. a unit Gaussian)

- $p_\theta(x \mid z)$ is tractable: a simple neural net to generate $x$ conditioned on $z$

- $p_\theta(x)$ is NOT tractable: we would need to integrate over all $z$

- $p_\theta(z \mid x)$ is NOT tractable because applying Bayes' rule puts the intractable $p_\theta(x)$ in the denominator
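To make "integrate over all latent values" concrete, here is a toy 1-D case where the marginal happens to be known exactly, so a brute-force estimate can be checked. It assumes a standard normal prior and a decoder that is just a Gaussian centered on the latent value with a fixed standard deviation (0.5 here, an arbitrary choice); under those assumptions the marginal is a zero-mean Gaussian with variance 1 + 0.5². The helper names are hypothetical.

```python
import math
import random

def normal_pdf(x, mean, std):
    """Density of N(mean, std^2) at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def mc_marginal(x, decoder_std, n_samples, rng):
    """Estimate p(x) = E_{z ~ N(0, 1)}[p(x | z)] by averaging over prior samples."""
    total = 0.0
    for _ in range(n_samples):
        z = rng.gauss(0.0, 1.0)
        total += normal_pdf(x, mean=z, std=decoder_std)
    return total / n_samples

rng = random.Random(0)
x, decoder_std = 1.0, 0.5
estimate = mc_marginal(x, decoder_std, n_samples=100_000, rng=rng)
exact = normal_pdf(x, mean=0.0, std=math.sqrt(1.0 + decoder_std ** 2))
```

In one dimension with a trivial decoder this brute-force average works. With a neural-net decoder and a high-dimensional latent, almost every prior sample contributes a negligible likelihood, so the number of samples needed becomes impractical.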

So what do we do? We approximate the intractable posterior $p_\theta(z \mid x)$ with $q_\phi(z \mid x)$ and maximize a tractable lower bound (ELBO) on the true log-likelihood.

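We approximate $p_\theta(z \mid x) \approx q_\phi(z \mid x)$ and then optimize by maximizing

$$\log p_\theta(x) \ge \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big] - D_{KL}\big(q_\phi(z \mid x) \,\|\, p(z)\big)$$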
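One standard way to see why this quantity is a lower bound, sketched here with $q_\phi(z \mid x)$ for the encoder, $p_\theta(x \mid z)$ for the decoder, $p(z)$ for the prior and $p_\theta(z \mid x)$ for the true posterior. The first line uses Bayes' rule inside an expectation over the encoder (valid because the left side does not depend on $z$), and the second multiplies the fraction by the encoder density over itself:

```latex
\begin{aligned}
\log p_\theta(x)
&= \mathbb{E}_{q_\phi(z \mid x)}\left[\log \frac{p_\theta(x \mid z)\, p(z)}{p_\theta(z \mid x)}\right] \\
&= \mathbb{E}_{q_\phi(z \mid x)}\left[\log \frac{p_\theta(x \mid z)\, p(z)\, q_\phi(z \mid x)}{p_\theta(z \mid x)\, q_\phi(z \mid x)}\right] \\
&= \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big]
   - D_{KL}\big(q_\phi(z \mid x) \,\|\, p(z)\big)
   + D_{KL}\big(q_\phi(z \mid x) \,\|\, p_\theta(z \mid x)\big) \\
&\ge \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big]
   - D_{KL}\big(q_\phi(z \mid x) \,\|\, p(z)\big)
\end{aligned}
```

The dropped term is a KL divergence, so it is non-negative, and the bound is tight exactly when the encoder matches the true posterior.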

Variants

Walkthrough (CS231n 2025 Lec 13)

Motivation: from (non-variational) autoencoder to VAE

A plain autoencoder learns features by training encoder + decoder to reconstruct $x$ with L2 loss (no labels, useful for downstream tasks). But as a generative model it fails:

- If we throw away the encoder and want to sample $x$, we need a new $z$. Generating $z$ is no easier than generating $x$, since the encoder has squeezed the data into whatever weird-shaped manifold $z$ occupies

- Fix: force $z$ to come from a known distribution (e.g. $\mathcal{N}(0, I)$) so we can sample it trivially

Probabilistic setup

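- Prior $p(z) = \mathcal{N}(0, I)$: simple, samplable

- Decoder $p_\theta(x \mid z) = \mathcal{N}(\mu_\theta(z), \sigma^2 I)$: a neural net outputting a mean (with fixed variance), so $\log p_\theta(x \mid z) = -\frac{1}{2\sigma^2}\lVert x - \mu_\theta(z) \rVert_2^2 + C$, meaning maximizing log-likelihood under a Gaussian decoder equals minimizing L2 reconstruction

- Encoder $q_\phi(z \mid x) = \mathcal{N}\big(\mu_\phi(x), \operatorname{diag}(\sigma_\phi^2(x))\big)$: a neural net outputting mean + (diagonal) variance of the approximate posterior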
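The log-likelihood and L2 equivalence for a fixed-variance Gaussian decoder can be checked numerically. The sketch below compares two candidate decoder outputs for the same input; the value 0.7 for the decoder standard deviation is an arbitrary assumption, and `gaussian_log_likelihood` is a hypothetical helper name.

```python
import numpy as np

def gaussian_log_likelihood(x, mean, sigma):
    """log N(x; mean, sigma^2 I) for a fixed scalar sigma."""
    d = x.size
    sq_err = np.sum((x - mean) ** 2)
    return -0.5 * sq_err / sigma**2 - 0.5 * d * np.log(2 * np.pi * sigma**2)

rng = np.random.default_rng(0)
x = rng.normal(size=5)
mean_a = rng.normal(size=5)   # two candidate decoder outputs
mean_b = rng.normal(size=5)
sigma = 0.7                   # fixed decoder std (assumed value)

ll_gap = gaussian_log_likelihood(x, mean_a, sigma) - gaussian_log_likelihood(x, mean_b, sigma)
l2_gap = np.sum((x - mean_a) ** 2) - np.sum((x - mean_b) ** 2)
# The normalizing constant cancels, leaving ll_gap = -l2_gap / (2 * sigma**2).
```

Because the gap in log-likelihood is a negative multiple of the gap in squared error, whichever output has the smaller L2 error also has the higher likelihood.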

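Marginal $p_\theta(x) = \int p_\theta(x \mid z)\, p(z)\, dz$ is intractable (can’t integrate over all $z$). Bayes’ rule $p_\theta(x) = \frac{p_\theta(x \mid z)\, p(z)}{p_\theta(z \mid x)}$ has $p_\theta(z \mid x)$, which is also intractable. So we introduce $q_\phi(z \mid x) \approx p_\theta(z \mid x)$ and maximize the ELBO instead.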

Training objective

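$$\log p_\theta(x) \ge \mathbb{E}_{z \sim q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big] - D_{KL}\big(q_\phi(z \mid x) \,\|\, p(z)\big)$$

The KL term is closed-form for Gaussians (the KL between two diagonal Gaussians has a known formula). The reconstruction term is an expectation over $z \sim q_\phi(z \mid x)$, which we estimate by Monte Carlo with the reparametrization trick $z = \mu_\phi(x) + \sigma_\phi(x) \odot \epsilon$, $\epsilon \sim \mathcal{N}(0, I)$.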
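For reference, the known formula for the KL term when the encoder is a diagonal Gaussian with mean $\mu$ and variances $\sigma^2$ over $J$ latent dimensions and the prior is a standard normal, together with the single-sample Monte Carlo estimate of the reconstruction term. The symbol $J$ is introduced here for the latent dimensionality.

```latex
D_{KL}\big(\mathcal{N}(\mu, \operatorname{diag}(\sigma^2)) \,\|\, \mathcal{N}(0, I)\big)
  = \frac{1}{2} \sum_{j=1}^{J} \left( \sigma_j^2 + \mu_j^2 - 1 - \log \sigma_j^2 \right)

\mathbb{E}_{z \sim q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big]
  \approx \log p_\theta\big(x \mid \mu + \sigma \odot \epsilon\big),
  \quad \epsilon \sim \mathcal{N}(0, I)
```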

Training loop

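- $x \to$ encoder $\to \mu_\phi(x), \sigma_\phi^2(x)$

- Prior loss: KL-pull $q_\phi(z \mid x)$ toward $p(z) = \mathcal{N}(0, I)$

- Sample $z = \mu_\phi(x) + \sigma_\phi(x) \odot \epsilon$ via reparametrization (so gradients flow through $\mu_\phi$ and $\sigma_\phi$)

- $z \to$ decoder $\to \hat{x}$

- Reconstruction loss: L2 between $x$ and $\hat{x}$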
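The five steps can be traced end to end on a deliberately tiny model. The sketch below is an assumption-heavy toy, not the lecture's setup: data and latent are both scalars, the encoder mean is linear in the input, the log-variance is a single input-independent parameter, the decoder is linear with unit variance, and gradients are derived by hand instead of using autodiff. All names (`loss_and_grads`, the parameters `a, b, c, w, d`) are hypothetical.

```python
import numpy as np

def loss_and_grads(params, x, eps):
    """Loss and hand-derived gradients for a scalar linear VAE on a batch."""
    a, b, c, w, d = params
    # 1. Encoder: mean and (input-independent) log-variance c of q(z | x).
    mu = a * x + b
    s = np.exp(0.5 * c)                      # standard deviation
    # 2. Prior loss: KL(N(mu, s^2) || N(0, 1)).
    kl = 0.5 * (mu**2 + s**2 - c - 1.0)
    # 3. Reparameterized sample.
    z = mu + s * eps
    # 4. Decoder.
    x_hat = w * z + d
    # 5. Reconstruction loss (L2).
    r = x_hat - x
    rec = 0.5 * r**2
    loss = np.mean(rec + kl)
    # Backward pass: gradients reach a, b, c through z because eps is a constant.
    g_mu = r * w + mu
    g_c = 0.5 * r * w * eps * s + 0.5 * (s**2 - 1.0)
    grads = np.array([np.mean(g_mu * x), np.mean(g_mu), np.mean(g_c),
                      np.mean(r * z), np.mean(r)])
    return loss, grads

rng = np.random.default_rng(0)
data = rng.normal(loc=2.0, scale=1.0, size=256)   # toy 1-D dataset
params = np.array([0.1, 0.0, 0.0, 0.1, 0.0])      # a, b, c, w, d
losses = []
for step in range(500):
    eps = rng.standard_normal(data.shape)         # fresh noise every step
    loss, grads = loss_and_grads(params, data, eps)
    params -= 0.05 * grads                        # plain gradient descent
    losses.append(loss)
```

In practice the backward pass would come from an autodiff library; writing it out by hand here only serves to show that the encoder parameters receive gradient through the sampled latent.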

The two losses fight each other

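- Reconstruction wants $\sigma_\phi(x) = 0$ and a unique $\mu_\phi(x)$ for each $x$ (so the decoder can reconstruct deterministically, essentially a plain autoencoder)

- Prior matching wants $\sigma_\phi(x) = 1$ and $\mu_\phi(x) = 0$ for every $x$ (so $q_\phi(z \mid x) = p(z)$ and sampling is easy)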
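The two extremes can be put in numbers with the closed-form KL between a 1-D Gaussian and a standard normal. This is a small sketch for a single latent dimension; the helper name and the specific means and standard deviations are illustrative.

```python
import math

def kl_to_standard_normal(mu, sigma):
    """KL(N(mu, sigma^2) || N(0, 1)) for a single latent dimension."""
    return 0.5 * (sigma**2 + mu**2 - 1.0 - math.log(sigma**2))

kl_prior_match = kl_to_standard_normal(mu=0.0, sigma=1.0)   # encoder equals the prior
kl_sharp = kl_to_standard_normal(mu=2.0, sigma=1e-3)        # near-deterministic code
kl_sharper = kl_to_standard_normal(mu=2.0, sigma=1e-6)      # penalty keeps growing as sigma -> 0
```

The KL penalty is zero only when the encoder ignores the input entirely, and it grows without bound as the code becomes deterministic, so neither loss can win outright.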

The balance between them is what makes VAEs generative rather than just compressive.

Sampling

At inference, throw away the encoder. Sample $z \sim p(z) = \mathcal{N}(0, I)$, run it through the decoder, get a new image.

Disentangling

Because the prior has a diagonal covariance, the dimensions of $z$ are independent under the prior, and the KL term pushes the encoder toward the same structure. Walking one dimension at a time often maps to a human-interpretable factor (digit identity, stroke width, pose). Kingma & Welling’s ICLR 2014 MNIST grid, varying $z_1$ vs $z_2$ to smoothly trace through digit classes and styles, is the canonical demo.
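A sketch of how such a 2-D latent grid might be built. Grid points are spaced evenly in probability and mapped through the inverse Gaussian CDF, so cells cover the prior's mass evenly. The decoder here is a random, untrained stand-in (`W_dec` and `decode` are hypothetical), so the outputs only illustrate shapes, not meaningful images.

```python
import numpy as np
from statistics import NormalDist

def latent_grid(n):
    """n x n grid of 2-D latent codes, evenly spaced in prior probability mass."""
    probs = np.linspace(0.05, 0.95, n)
    coords = np.array([NormalDist().inv_cdf(p) for p in probs])
    z1, z2 = np.meshgrid(coords, coords)
    return np.stack([z1.ravel(), z2.ravel()], axis=1)   # shape (n * n, 2)

rng = np.random.default_rng(0)
W_dec = rng.normal(size=(2, 6))     # stand-in for a trained decoder

def decode(z):
    """Map latent codes to decoder outputs (toy, untrained)."""
    return np.tanh(z @ W_dec)

grid = latent_grid(5)
outputs = decode(grid).reshape(5, 5, -1)   # one decoded output per grid cell
```

With a trained decoder, moving along one grid axis while holding the other fixed is the "walk one dimension at a time" experiment described above.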

From CS231n 2025 Lec 13 slides ~62-112 (non-variational AE recap, motivation for forcing known prior, encoder/decoder Gaussian parameterization, log-likelihood L2 equivalence, ELBO derivation via Bayes + multiply-by-$q_\phi(z \mid x) / q_\phi(z \mid x)$, training steps with KL prior / reparametrization / reconstruction, “losses fight” interpretation, sampling procedure, disentangling MNIST grid).