ORB-SLAM

This is a way to implement Visual SLAM that Soham told me about.

Writing this from scratch, using the paper: https://arxiv.org/pdf/1502.00956.pdf

Helpful Slides?

My personal notes

- θ is the edge weight in the covisibility graph: the number of map points shared between two keyframes

- An edge exists if two keyframes share observations of at least 15 map points
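To make the edge rule above concrete, here is a minimal sketch of building such a graph. It assumes a simplified input, a dict from keyframe id to the set of map point ids it observes. The names `build_covisibility_graph`, `observations`, and `MIN_SHARED_POINTS` are made up for illustration and are not taken from the ORB-SLAM code.

```python
from collections import Counter
from itertools import combinations

MIN_SHARED_POINTS = 15  # threshold from the notes above


def build_covisibility_graph(observations, min_shared=MIN_SHARED_POINTS):
    """Return {(kf_a, kf_b): weight} for keyframe pairs sharing enough map points.

    observations: dict mapping keyframe id -> set of map point ids it observes
    (a hypothetical, simplified stand-in for a real keyframe database).
    """
    # Invert the index: for each map point, which keyframes observe it?
    seen_by = {}
    for kf_id, point_ids in observations.items():
        for point_id in point_ids:
            seen_by.setdefault(point_id, []).append(kf_id)

    # Every map point adds 1 to the weight of each keyframe pair observing it.
    shared = Counter()
    for kf_ids in seen_by.values():
        for pair in combinations(sorted(kf_ids), 2):
            shared[pair] += 1

    # Keep only the edges at or above the threshold.
    return {pair: weight for pair, weight in shared.items() if weight >= min_shared}


observations = {
    "kf0": set(range(0, 40)),   # sees points 0..39
    "kf1": set(range(20, 60)),  # shares points 20..39 with kf0 (20 points)
    "kf2": set(range(50, 90)),  # shares points 50..59 with kf1 (10 points)
}
graph = build_covisibility_graph(observations)
print(graph)
```

Here only the kf0/kf1 pair gets an edge (weight 20). kf1 and kf2 share 10 points, which is below the threshold, so they stay unconnected.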

Visual SLAM book notes

The Visual SLAM book gives a gentle introduction to ORB-SLAM in a few paragraphs.

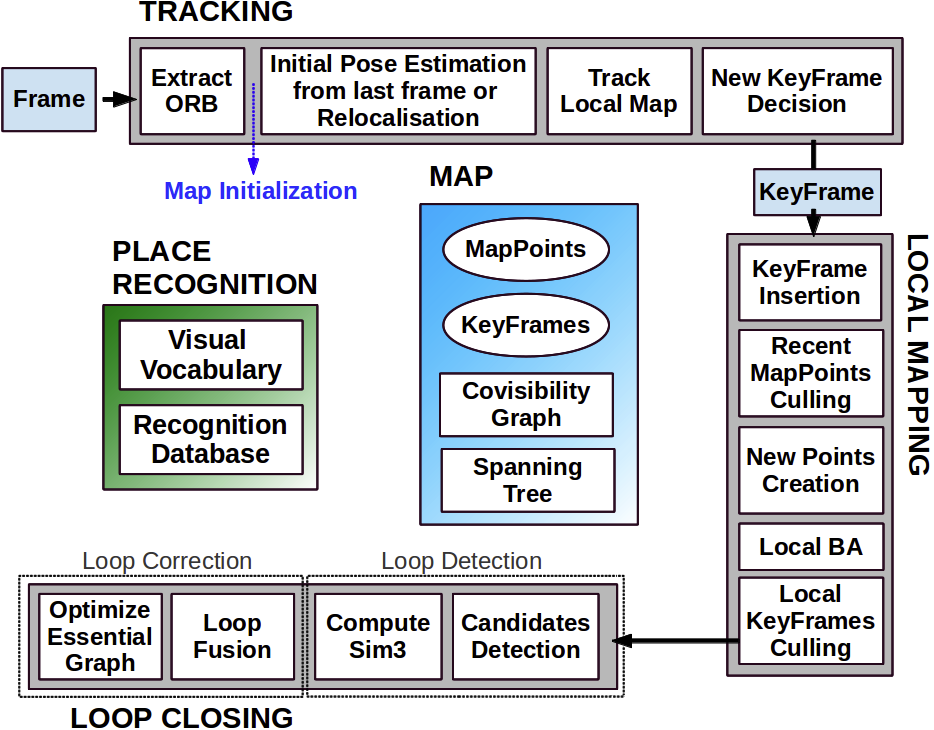

Basically, you have 3 threads running in parallel:

- Tracking thread: real-time tracking of feature points

    - Extracts ORB feature points from each new image, compares them with the most recent keyframe, computes the feature points’ locations, and roughly estimates the camera pose

    - Decides whether to introduce a new keyframe

- Local Mapping thread: local bundle adjustment (covisibility graph, commonly known as the small graph)

    - The small graph solves a local bundle adjustment problem over the feature points in the local space and the camera poses

    - Responsible for refining camera poses and the spatial locations of feature points

- Loop Closing thread: global pose graph optimization (essential graph, known as the big graph)

    - Performs loop detection on the global map and keyframes to eliminate accumulated errors

Together, the tracking and local mapping threads already form a good visual odometry.
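The three-thread layout described above can be sketched as a pipeline of threads connected by queues. This is only a skeleton under stated assumptions. The stage bodies are comments, the "every 3rd frame becomes a keyframe" rule is a placeholder and not the paper's criterion, and all names (`tracking`, `local_mapping`, `loop_closing`, `run_pipeline`, `STOP`) are made up for illustration.

```python
import queue
import threading

STOP = object()  # sentinel that tells a downstream thread to shut down


def tracking(frames, to_mapping, log):
    """Process every frame; forward only the ones promoted to keyframes."""
    for frame_id in frames:
        # Real system: extract ORB features, match, estimate the camera pose.
        # Placeholder rule (an assumption): promote every 3rd frame.
        if frame_id % 3 == 0:
            log.append(("tracking", frame_id))
            to_mapping.put(frame_id)
    to_mapping.put(STOP)


def local_mapping(to_mapping, to_loop_closing, log):
    """Refine each new keyframe locally, then hand it to loop closing."""
    while (keyframe_id := to_mapping.get()) is not STOP:
        # Real system: local bundle adjustment over covisible keyframes.
        log.append(("local_mapping", keyframe_id))
        to_loop_closing.put(keyframe_id)
    to_loop_closing.put(STOP)


def loop_closing(to_loop_closing, log):
    """Consume keyframes and look for loops."""
    while (keyframe_id := to_loop_closing.get()) is not STOP:
        # Real system: loop detection, then pose graph optimization.
        log.append(("loop_closing", keyframe_id))


def run_pipeline(num_frames):
    to_mapping, to_loop_closing, log = queue.Queue(), queue.Queue(), []
    threads = [
        threading.Thread(target=tracking, args=(range(num_frames), to_mapping, log)),
        threading.Thread(target=local_mapping, args=(to_mapping, to_loop_closing, log)),
        threading.Thread(target=loop_closing, args=(to_loop_closing, log)),
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return log


log = run_pipeline(10)
keyframes_closed = [kf for stage, kf in log if stage == "loop_closing"]
print(keyframes_closed)
```

The point of the queues is that tracking never waits on the slower stages: it keeps handling frames in real time while mapping and loop closing catch up on keyframes in the background.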

ORB-SLAM

- Monocular SLAM (Simultaneous Localization and Mapping).

- Initial version: tracking, local mapping, and loop closing, all built on the same ORB features.

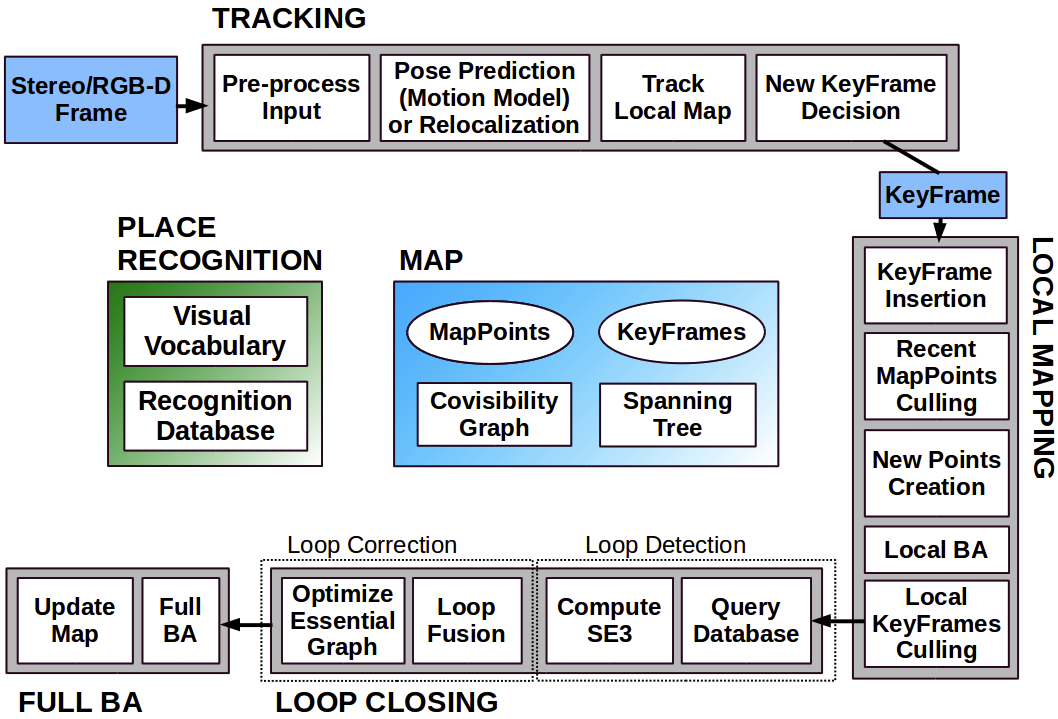

ORB-SLAM2

- https://arxiv.org/pdf/1610.06475.pdf

- Locally: file:///Users/stevengong/My%20Drive/Books/ORB-SLAM2%20Paper.pdf

- Supports monocular, stereo, and RGB-D cameras.

- Improved loop closure, relocalization, and map reuse.

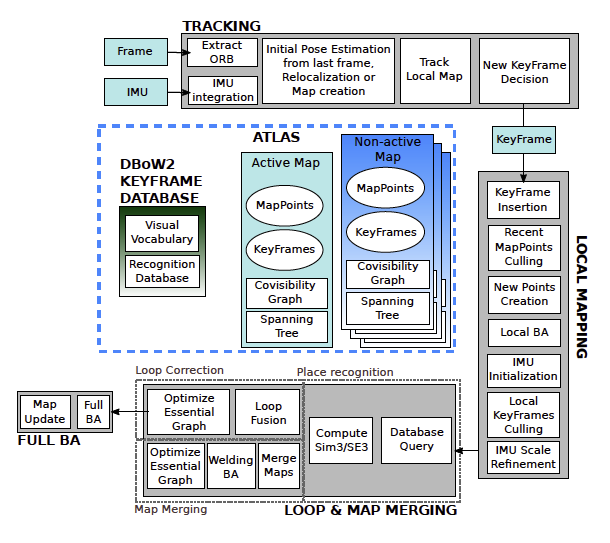

ORB-SLAM3

- https://arxiv.org/pdf/2007.11898.pdf

- Locally: file:///Users/stevengong/My%20Drive/Books/ORB-SLAM3%20Paper.pdf

- Advanced multi-map merging.

- Real-time performance in very large environments.

- Supports visual-inertial setups (monocular or stereo camera plus an IMU) for improved tracking.

3 Threads in parallel:

- Tracking

- Local Mapping

- Loop Closing

Place recognition = ability to recognize previously visited locations in the map

Keyframe

A “keyframe” is an individual frame (image) that has been selected as particularly useful or informative for the SLAM algorithm.

First core idea: Feature selection

When choosing features to track, there are invariances we are looking for:

- Scale invariance

- Rotation invariance

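This excludes BRIEF and LDB, which are not rotation invariant. ORB (oriented FAST corners with a rotation-aware BRIEF descriptor, computed over a scale pyramid) is a good candidate.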
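To see where ORB's rotation invariance comes from: each keypoint gets an orientation from the intensity centroid of its patch, and the descriptor is then sampled relative to that angle. Below is a minimal sketch of the orientation step only, on a toy 5x5 patch. It assumes a square patch (real ORB uses a circular one), and the helper names `patch_orientation` and `rotate_90_clockwise` are made up for illustration.

```python
import math


def patch_orientation(patch):
    """Angle (degrees) from the center of a square patch to its intensity centroid."""
    center = (len(patch) - 1) / 2
    m10 = m01 = 0.0
    for row, values in enumerate(patch):
        for col, intensity in enumerate(values):
            m10 += (col - center) * intensity  # first-order moment in x
            m01 += (row - center) * intensity  # first-order moment in y
    return math.degrees(math.atan2(m01, m10))


def rotate_90_clockwise(patch):
    """Rotate a list-of-lists image by 90 degrees clockwise."""
    return [list(row) for row in zip(*patch[::-1])]


patch = [[0] * 5 for _ in range(5)]
patch[2][4] = 200  # bright pixel at the right edge
patch[1][3] = 100  # dimmer pixel up and to the right of the center

angle = patch_orientation(patch)
rotated_angle = patch_orientation(rotate_90_clockwise(patch))
print(round(angle, 2), round(rotated_angle, 2))
```

Rotating the patch by 90 degrees shifts the measured orientation by the same 90 degrees (image y axis points down, so clockwise is positive). Because the angle follows the image content, sampling the descriptor relative to it yields roughly the same bits regardless of how the camera is rotated.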