Policy Evaluation

Problem: Evaluate a given policy Solution: Iterative application of Bellman Expectation Backup

- Using synchronous backups,

- At each iteration, for all states Update from , where is a successor state of

- Make sure to know this equation by heart (bellman expectation backup), and the difference with the bellman optimality equation

- You should also know for the deterministic Value Function

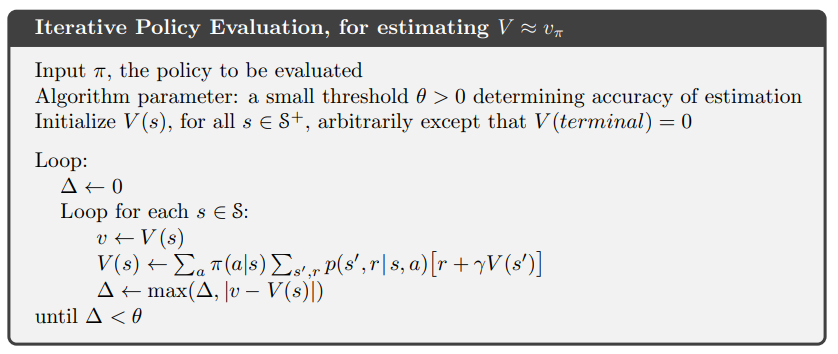

Pseudocode

Question: from the stanford thing, they tell us to initialize to 0, but here its random except the terminal value function is also 0. Is it just for faster convergence?#gap-in-knowledge