RGB-D Camera

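RGB-D cameras capture a color (RGB) image together with a per-pixel depth (D) measurement of the scene. This contrasts with conventional cameras, which record only light intensity.

What is a light field?

A light field describes both the intensity of light in a scene and the direction in which the light rays travel through space. Recording it is the job of a light field (plenoptic) camera. An RGB-D camera does not record ray directions, only color and per-pixel distance.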
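Concretely, an RGB-D frame can be treated as a color image plus a depth map of the same size, so each pixel can be lifted to a 3D point in the camera frame. The sketch below assumes an ideal pinhole model with no lens distortion. The `Intrinsics` class, the `backproject` helper, and the numeric values are hypothetical and only for illustration; real values come from the calibration of a specific camera.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Hypothetical pinhole intrinsics in pixels (illustrative values only)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def backproject(u, v, depth_m, k):
    """Lift pixel (u, v) with depth depth_m (meters) to a 3D point (x, y, z)."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


# Assumed intrinsics for a 640x480 image; not taken from any real device.
k = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)

# A pixel 100 px to the right of the image center, measured at 2 m depth.
point = backproject(420, 240, 2.0, k)
print(point)
```

Applying `backproject` to every pixel with a valid depth reading would give a point cloud of the scene.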

In Practice

I’ve heard from various people that RealSense cameras are actually terrible to work with. They have an FPGA built into them.

From SLAM Book (p.9)

RGB-D cameras are a relatively new type of camera that has been on the rise since around 2010.

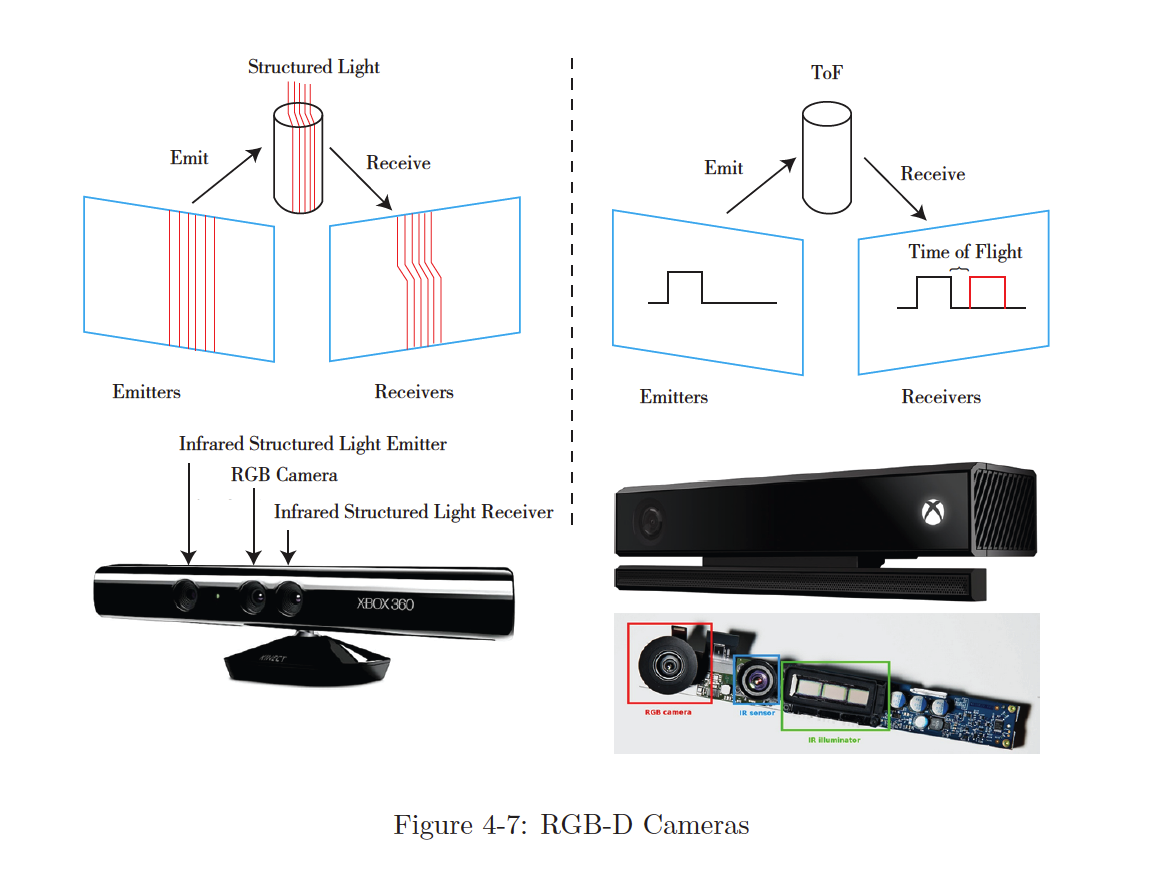

RGB-D cameras use infrared structured light or Time-of-Flight (ToF) principles: they measure the distance between objects and the camera by actively emitting light onto the object and receiving the returned light. Unlike a stereo camera, which solves for depth in software, an RGB-D camera measures it with physical sensors, which saves a lot of computation.
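The ToF idea of emitting light and timing its return reduces to one line of arithmetic. This is a minimal sketch that assumes the sensor reports a direct round-trip time; many real ToF sensors instead infer the delay from the phase shift of modulated light. The function name `tof_distance_m` is made up for this note.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s):
    """Distance to the object given the round-trip travel time of the emitted light."""
    # The light travels to the object and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


# A round trip of 20 nanoseconds corresponds to roughly 3 meters.
d = tof_distance_m(20e-9)
print(f"{d:.3f} m")
```

The small numbers involved hint at why this is done in dedicated hardware: a 1 cm change in distance changes the round-trip time by only about 67 picoseconds.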

Common RGB-D cameras include

- Kinect / Kinect V2

- Xtion Pro Live

- RealSense, etc.

Problems

RGB-D cameras suffer from issues including narrow measurement range, noisy data, small field of view, susceptibility to sunlight interference, and an inability to measure transparent materials.

RGB-D cameras are mainly used in indoor environments and are not suitable for outdoor applications.

- Note that this book was written in 2016, so these statements may be outdated.

Two types of RGB-D cameras:

- The first kind of RGB-D sensor uses structured infrared light to measure pixel distance. Many older RGB-D sensors belong to this kind, for example the 1st-generation Kinect, the 1st-generation Project Tango, and Intel RealSense.

- The second kind measures pixel distance using the time-of-flight (ToF) principle. Examples are the Kinect 2 and some ToF sensors in cellphones.
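For the structured light kind, depth is typically recovered by triangulation between the infrared projector and the infrared camera. The sketch below is a simplified model, not how any particular sensor is implemented: it assumes the projector and camera behave like a rectified stereo pair, so depth follows z = f * b / d. The function name and the numbers are hypothetical.

```python
def structured_light_depth_m(focal_px, baseline_m, disparity_px):
    """Triangulated depth (meters) from the shift of a projected pattern.

    focal_px:     focal length of the IR camera, in pixels (assumed known)
    baseline_m:   distance between IR projector and IR camera, in meters
    disparity_px: horizontal shift of the observed pattern, in pixels
    """
    if disparity_px <= 0:
        # No usable pattern match at this pixel, so no depth reading.
        return None
    return focal_px * baseline_m / disparity_px


# Illustrative values only: 580 px focal length, 7.5 cm baseline, 29 px shift.
z = structured_light_depth_m(580.0, 0.075, 29.0)
print(z)
```

Because depth is inversely proportional to disparity, a fixed disparity error causes a larger depth error for distant objects, which is one reason the usable range is limited.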