Camera

See Camera Pinhole Model.

Resources

- Camera Basics and Propagation of Light (Cyrill Stachniss, 2021)

- Slides here

- Fei-Fei Li CS231n first lecture

Types of Cameras

For Computer Vision

For Filmmaking

Other types of Camera

A camera is literally just projecting a bunch of 3D points onto a 2D plane. DUH.

Lidar keeps the depth information that this projection throws away.
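A minimal sketch of that projection, assuming an ideal pinhole camera with points given in the camera frame (Z pointing forward). The function name `project_pinhole` and the `focal_length` parameter are made up for illustration, not taken from the lectures.

```python
def project_pinhole(points_3d, focal_length=1.0):
    """Project camera-frame points (X, Y, Z) onto an image plane at distance focal_length."""
    projected = []
    for x, y, z in points_3d:
        if z <= 0:
            raise ValueError("point must be in front of the camera (Z > 0)")
        # Perspective division: everything is scaled by 1/Z, so depth is lost.
        projected.append((focal_length * x / z, focal_length * y / z))
    return projected


# Two different 3D points on the same viewing ray, plus one on the optical axis.
points = [(1.0, 2.0, 4.0), (2.0, 4.0, 8.0), (0.0, 0.0, 1.0)]
pixels = project_pinhole(points, focal_length=2.0)
print(pixels)
```

The first two points sit at different depths but land on the same 2D location, which is exactly the information a camera drops and a Lidar keeps.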

This is so cool, there is a 3D camera: Light Field Camera

Check out this set of slides, they are great quality (CSC420 from UofT):

You should really understand how a camera works:

Concepts

Mirrorless

Vision

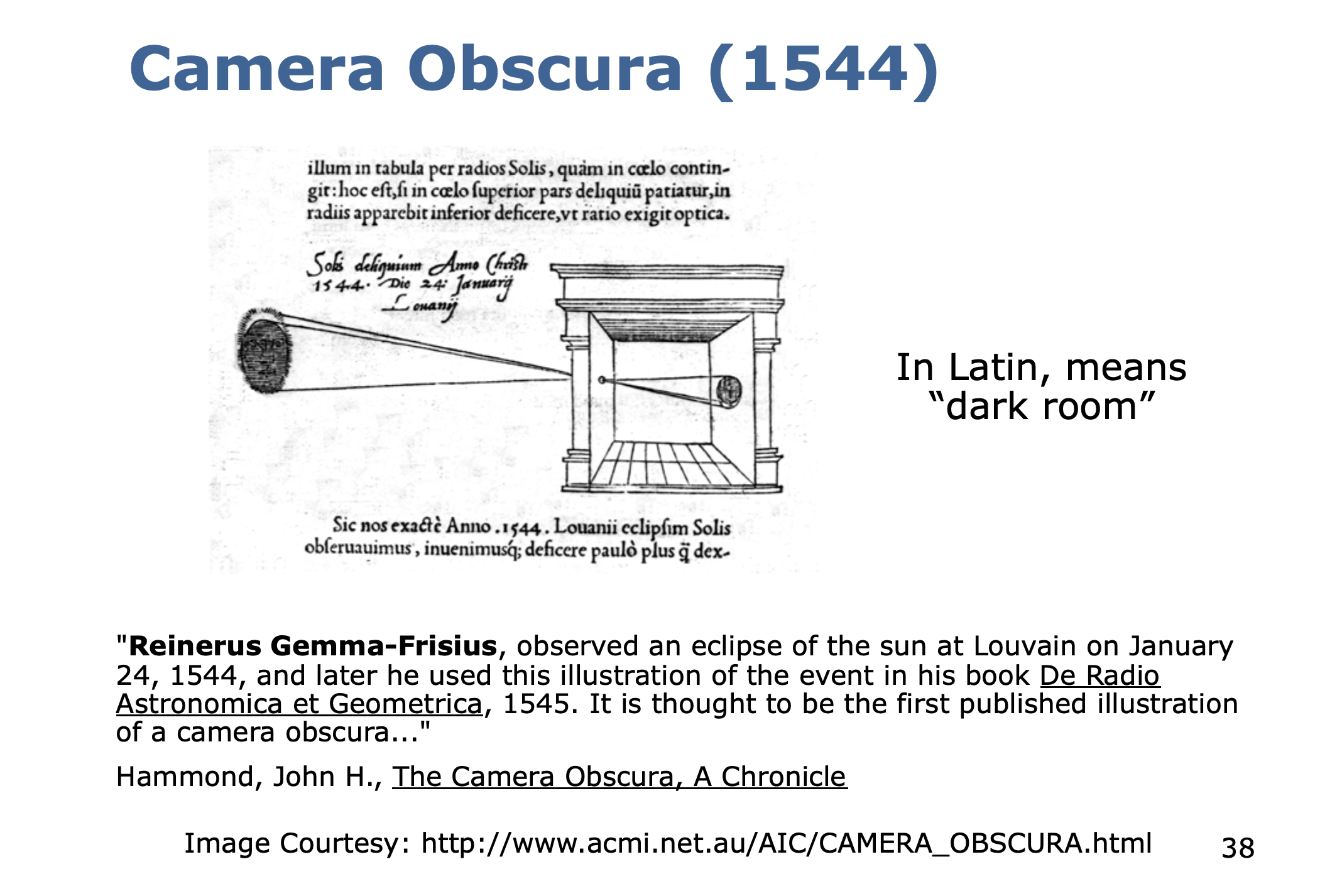

Leonardo da Vinci documented the camera obscura, an early precursor of the camera.

- Your primary visual cortex is the part of the brain farthest from your eyes. Interestingly, for hearing it's right behind the ears, and for smell it's right behind the nose

- About 50% of your brain is involved in vision

- Vision is the hardest and most important sensory perceptual cognitive system in the brain.

Vision is hierarchical. So a lot of the research before Deep Learning and ConvNet was focused on learning these features.

We are trying to solve the Recognition problem in Vision, that’s where it’s hot. But the quest for vision goes beyond recognition. Vision and robotics. 2D to 3D. Deep understanding of a picture.

Notes from Cyrill Stachniss

The only background I had was watching that CS231n lecture on cameras, and the Visual SLAM book.

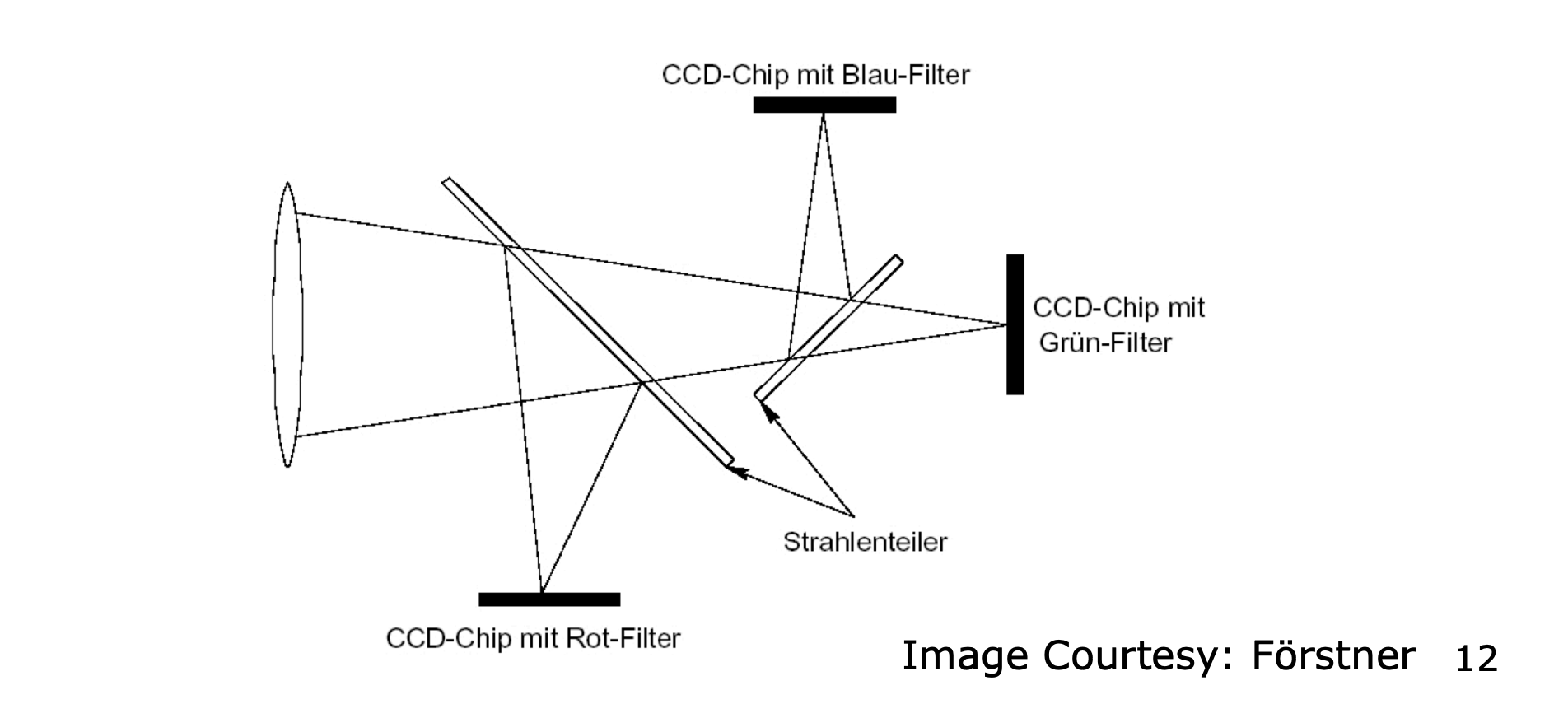

But I like that this goes into more depth. We can first split the camera into 2 pipelines: the physical and the electrical.

Lens and Camera Body (Physical Components)

- Lens → Aperture → Shutter

Sensor chip (Electrical Components)

- Sensor → A/D converter → post processing

For an explanation of the camera sensor, see Camera Sensor.

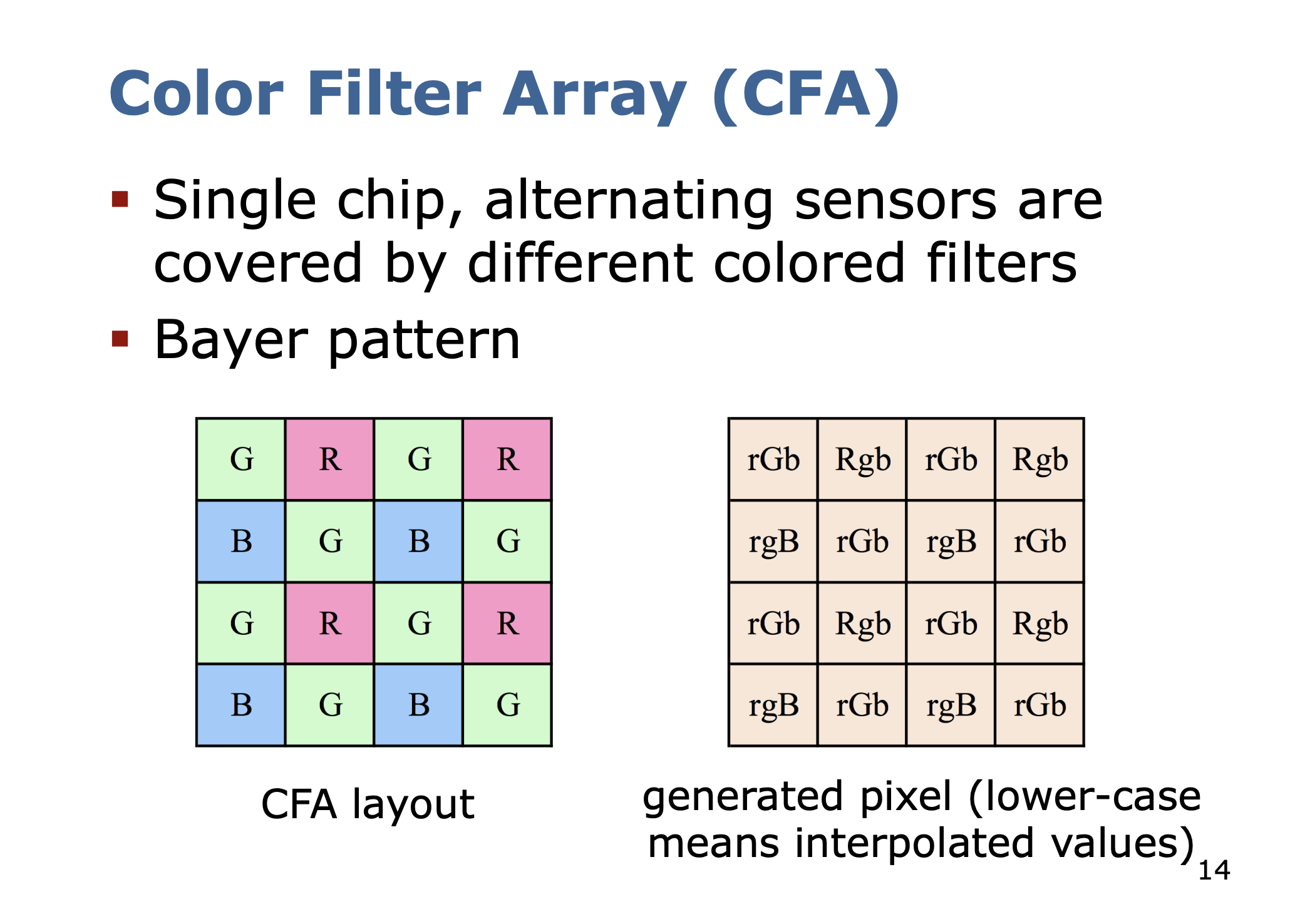

Our cameras have colors. How does this work?

Demosaicing

Interpolating the missing color values to obtain RGB values for all the pixels is called Demosaicing.
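A rough sketch of the idea, assuming an RGGB Bayer layout (the layout and the helper names `bayer_channel` and `demosaic` are my assumptions, not from the lecture). It fills each missing color by averaging the same-color samples in the 3x3 neighborhood, which is a simplified stand-in for proper bilinear interpolation.

```python
def bayer_channel(row, col):
    """Return which color an RGGB Bayer sensor measures at (row, col)."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic(raw):
    """Estimate (R, G, B) per pixel from a raw mosaic that is at least 2x2."""
    height, width = len(raw), len(raw[0])
    image = []
    for r in range(height):
        out_row = []
        for c in range(width):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            counts = {"R": 0, "G": 0, "B": 0}
            # Collect same-color samples in the 3x3 window, clipped at the border.
            for rr in range(max(0, r - 1), min(height, r + 2)):
                for cc in range(max(0, c - 1), min(width, c + 2)):
                    ch = bayer_channel(rr, cc)
                    sums[ch] += raw[rr][cc]
                    counts[ch] += 1
            pixel = {ch: sums[ch] / counts[ch] for ch in "RGB"}
            # The color actually measured at this pixel is kept as-is.
            pixel[bayer_channel(r, c)] = float(raw[r][c])
            out_row.append((pixel["R"], pixel["G"], pixel["B"]))
        image.append(out_row)
    return image


# A uniformly colored scene seen through the mosaic: each pixel holds one color only.
values = {"R": 10, "G": 20, "B": 30}
raw = [[values[bayer_channel(r, c)] for c in range(4)] for r in range(4)]
rgb = demosaic(raw)
print(rgb[0][0])
```

On a flat-colored scene this recovers the full color at every pixel; on real images, plain averaging like this blurs edges, which is why practical demosaicing algorithms are more elaborate.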

Shutter

Lens and Aperture

We need to quickly understand how light propagates, to understand why we need lenses.

3 Models to describe light propagation in Physics:

- Geometric or ray optics

- Wave optics based on Maxwell’s equations

- Particle/quantum optics based on the wave–particle duality

If you actually need to understand how light is turned into an electrical signal at the sensor, you need the 3rd model. That’s like DSP.

4 axioms of geometric optics

- A light ray is a straight line in homogeneous material

- At the border between two homogeneous materials, the light is reflected (Fresnel reflection) or refracted (Snell’s law)

- The optical path is reversible

- Intersecting light rays do not influence each other
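To make the refraction axiom concrete, here is a small sketch of Snell's law, $n_1 \sin\theta_1 = n_2 \sin\theta_2$. The refractive indices 1.0 (air) and 1.5 (typical glass) are assumed round values, and `refraction_angle` is a name I picked for illustration.

```python
import math


def refraction_angle(theta_in_deg, n1, n2):
    """Return the refracted angle in degrees, or None on total internal reflection."""
    s = n1 / n2 * math.sin(math.radians(theta_in_deg))
    if abs(s) > 1.0:
        return None  # no refracted ray exists, the light is fully reflected
    return math.degrees(math.asin(s))


air_to_glass = refraction_angle(30.0, 1.0, 1.5)
# Reversibility of the optical path: sending the ray back recovers the original angle.
back_to_air = refraction_angle(air_to_glass, 1.5, 1.0)
# A steep ray inside the glass cannot leave it.
glass_to_air = refraction_angle(60.0, 1.5, 1.0)
print(air_to_glass, back_to_air, glass_to_air)
```

The ray bends toward the normal when entering the denser material (about 19.5° for a 30° incoming ray), and running the same ray backwards gives 30° again, which matches the reversibility axiom.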

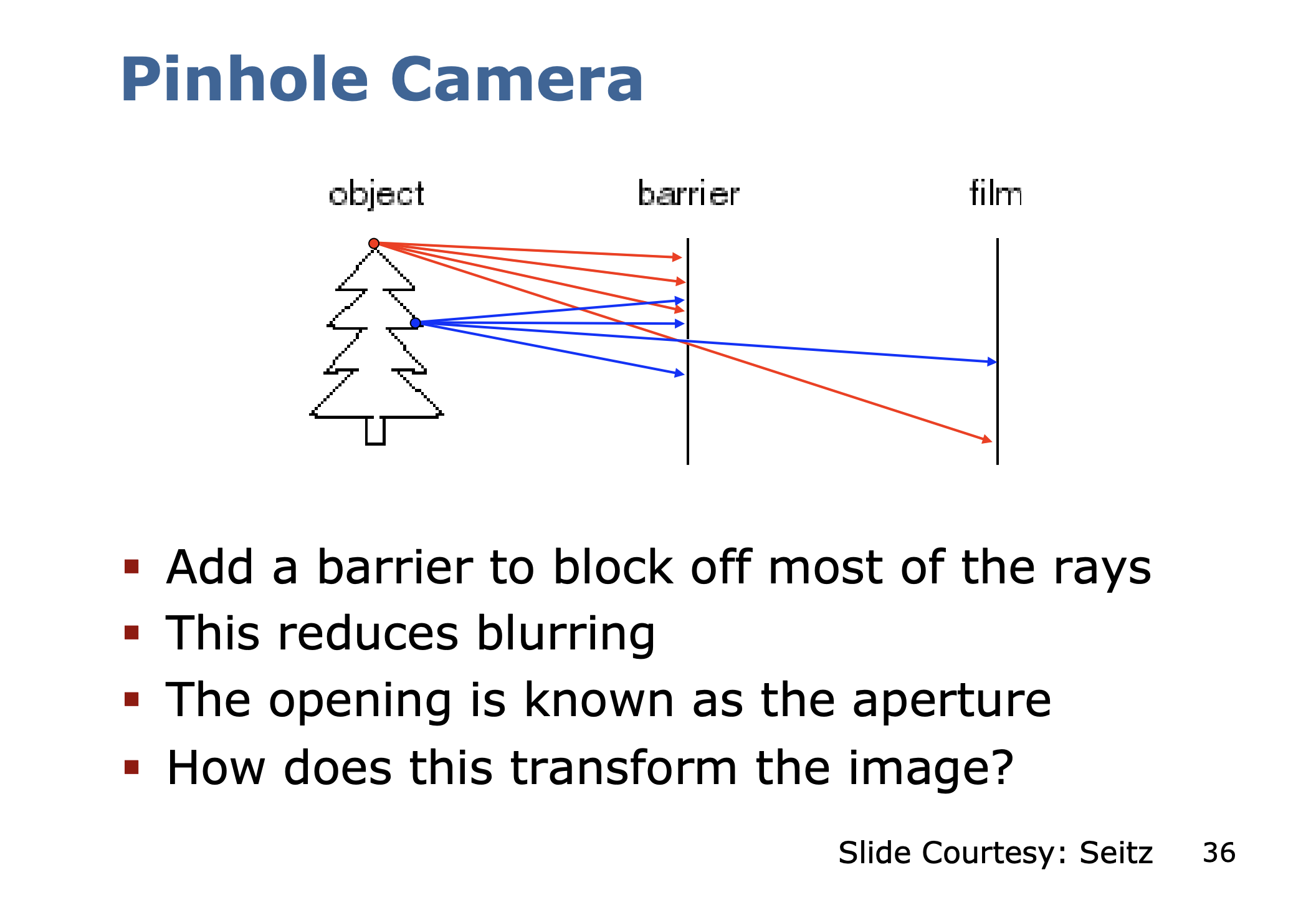

The system below does not work

Ahh, I finally understand why: without a pinhole, the rays from every point on the object land everywhere on the film, so no image forms.

- Our eyes work in a similar way

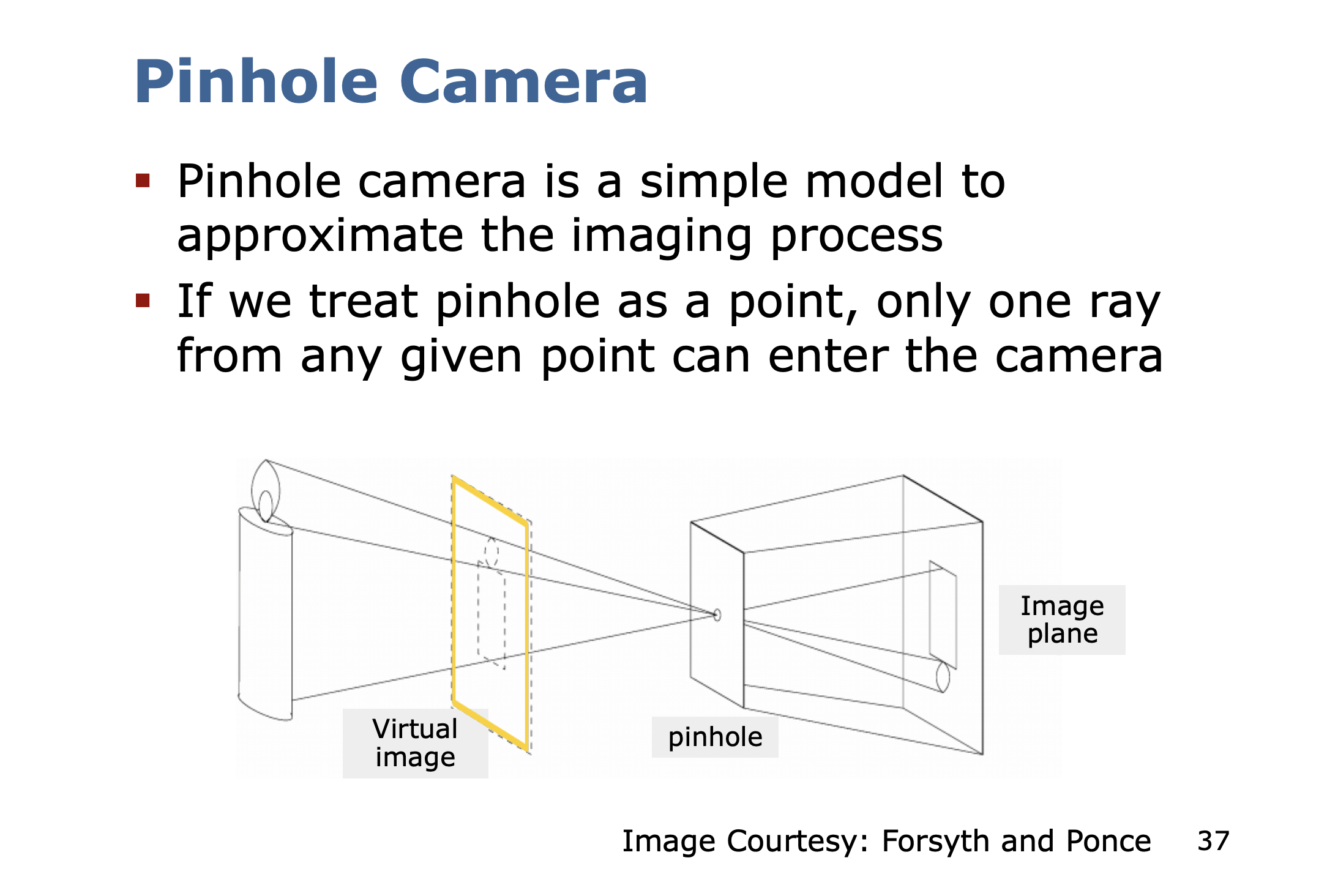

The image is actually flipped.

This is an awesome phenomenon where in a completely dark room, you can see an image being projected through the pinhole.

It was used to observe the sun.

And you can do this at home!!

Small vs. big holes for the pinhole camera

- Small pinhole: sharp image but requires long exposure times

- Large pinhole: short exposure times but blurry images

Why do images get blurry with a larger pinhole?

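Because if you think back to the tree drawing, a larger hole lets rays from neighbouring parts of the tree land on the same spot of the film, so each object point is smeared into a blob that overlaps with its neighbours.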
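A back-of-the-envelope sketch of this trade-off using only ray optics and similar triangles. It ignores diffraction (which also blurs the image when the hole gets very small), and the function names and example distances are my own assumptions.

```python
def blur_spot_diameter(hole_diameter, object_distance, film_distance):
    """Diameter of the spot that one object point paints on the film.

    The cone of rays from the point through the hole keeps widening
    until it hits the film (similar triangles). All lengths share one unit.
    """
    return hole_diameter * (object_distance + film_distance) / object_distance


def relative_exposure_time(hole_diameter, reference_diameter):
    """Exposure time needed relative to the reference hole (light scales with hole area)."""
    return (reference_diameter / hole_diameter) ** 2


# Object 2 m away, film 50 mm behind the hole, everything in millimetres.
small = blur_spot_diameter(0.5, 2000.0, 50.0)
large = blur_spot_diameter(5.0, 2000.0, 50.0)
slowdown = relative_exposure_time(0.5, 5.0)
print(small, large, slowdown)
```

With these numbers the 10x smaller hole gives a 10x smaller blur spot but needs roughly 100x the exposure time, which is the sharpness vs. exposure trade-off from the list above.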

There’s surprisingly a solution!

- Replace the pinhole with a lens

- I don’t understand how the lens can do this mapping well

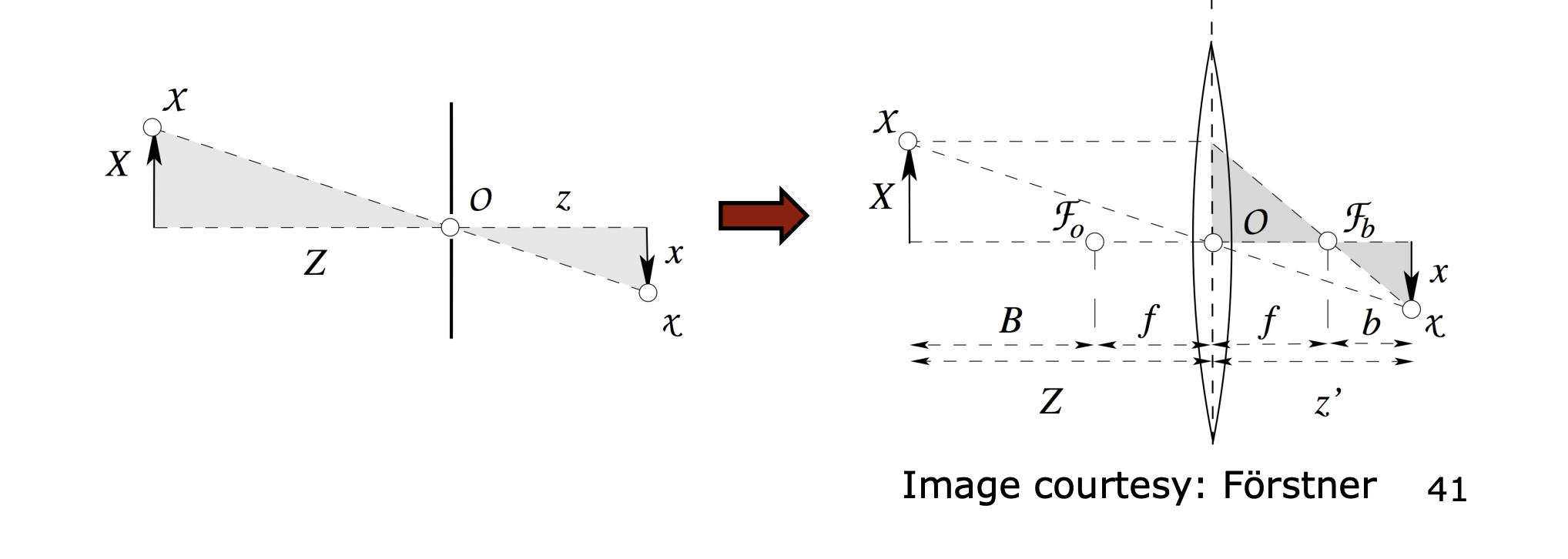

Lens Approximates the Pinhole

- The corresponding point on the object and in the image and the center of the lens should lie on one line

- The farther from the center of the lens a beam passes, the larger the error

- Use of an aperture to limit the error (trade-off between the usable light and price of the lens)

Three Assumptions Made in the Pinhole Camera/Thin Lens

- All rays from the object point intersect in a single point

- All image points lie on a plane

- The ray from the object point to the image point is a straight line

Aperture

Light Optics

A photon is an elementary particle. It is the “quantum of light”.

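Energy of a photon is $E = h\nu = \frac{hc}{\lambda}$

- where $h$ is the Planck constant, $\nu$ the frequency, $c$ the speed of light, and $\lambda$ the wavelength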

Quantum optics can model the interaction of light and matter

- Every sensor element of a camera chip turns photons into electric charge

- Intensity is proportional to the number of photons reaching the sensor (pixel)
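To get a feel for the numbers, here is a small sketch that evaluates the photon energy $E = hc/\lambda$ and counts how many photons make up a given amount of light energy. The 550 nm wavelength (green light) and the 1 femtojoule budget are arbitrary example values, and the function names are mine.

```python
PLANCK_H = 6.62607015e-34        # Planck constant in J*s
SPEED_OF_LIGHT = 299_792_458.0   # speed of light in m/s


def photon_energy(wavelength_m):
    """Energy of a single photon in joules for a wavelength in metres."""
    return PLANCK_H * SPEED_OF_LIGHT / wavelength_m


def photon_count(energy_joules, wavelength_m):
    """Number of photons of this wavelength that carry the given total energy."""
    return energy_joules / photon_energy(wavelength_m)


green = photon_energy(550e-9)
n_photons = photon_count(1e-15, 550e-9)
print(green, n_photons)
```

A single green photon carries only about 3.6e-19 J, so even a femtojoule of light reaching a pixel corresponds to a few thousand photons being counted.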

Intensity values

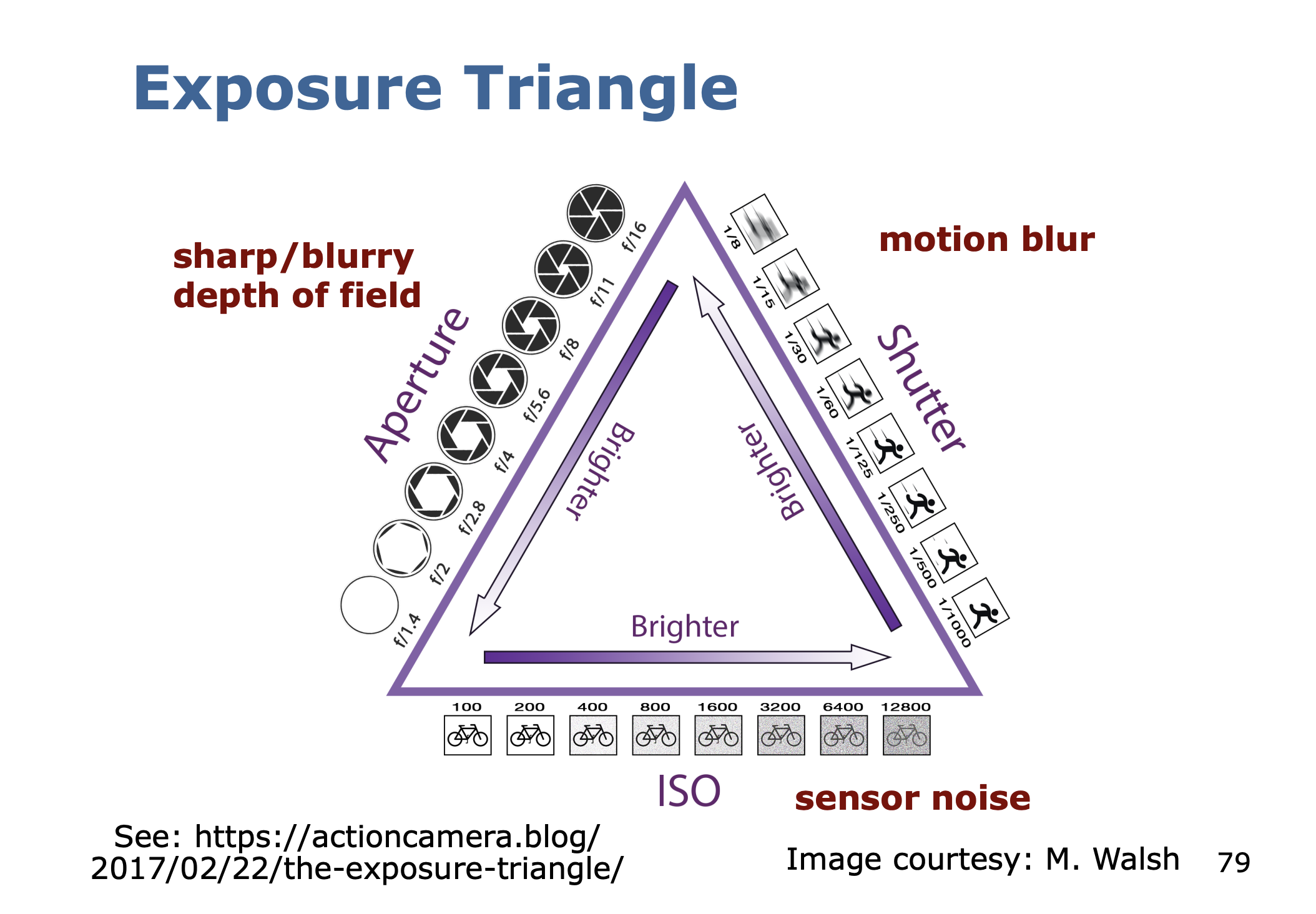

Camera

- Exposure time (“Tv”)

- Aperture/pinhole size (“Av”)

- Sensitivity of the chip (“ISO”)
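A rough sketch of how these three settings trade off against each other. It assumes the aperture is expressed as an f-number N (so the light gathered scales with 1/N²) and that ISO acts as a linear gain; `relative_brightness` is a hypothetical helper, not a camera API.

```python
import math


def relative_brightness(exposure_time_s, f_number, iso):
    """Unitless image brightness, proportional to time * ISO / N^2."""
    return exposure_time_s * iso / f_number ** 2


base = relative_brightness(1 / 100, 4.0, 100)
# Halving the exposure time can be compensated by doubling the ISO.
faster_shutter = relative_brightness(1 / 200, 4.0, 200)
# Opening the aperture from f/4 to f/2 lets in four times the light.
wider_aperture = relative_brightness(1 / 100, 2.0, 100)
print(base, faster_shutter, wider_aperture)
```

Different combinations of the three settings can give the same brightness, but they are not equivalent images: longer exposure adds motion blur, a wider aperture changes sharpness, and higher ISO amplifies noise along with the signal.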

ISO