Harris Corner

Learning from Cyrill Stachniss.

Resources

- Learned from the Visual Feature lecture: https://www.youtube.com/watch?v=nGya59Je4Bs&list=PLgnQpQtFTOGRYjqjdZxTEQPZuFHQa7O7Y&index=14&ab_channel=CyrillStachniss

- slides here

- Wikipedia also has solid formulas: https://en.wikipedia.org/wiki/Harris_corner_detector

- Slides from CMU https://www.cs.cmu.edu/~16385/s17/Slides/6.2_Harris_Corner_Detector.pdf

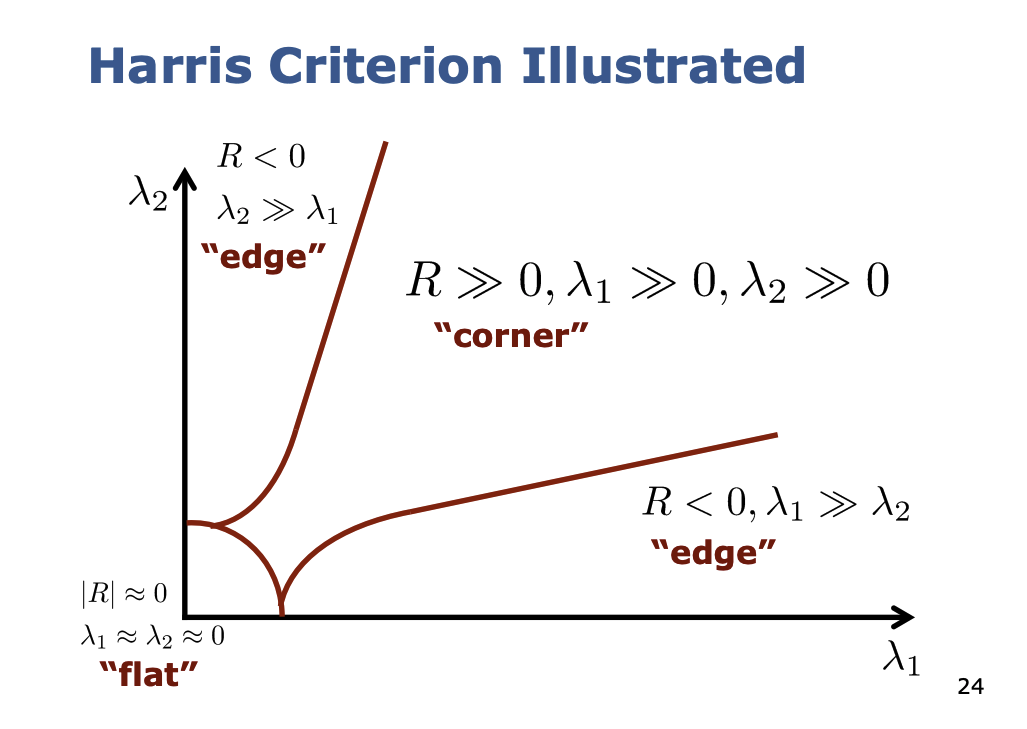

Criterion:

- $M$ is the Structure Matrix

- $\lambda_1$ and $\lambda_2$ are the two eigenvalues of the structure matrix

So in practice, we write it as an equation and solve for $\lambda_1$ and $\lambda_2$
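To get a feel for what the structure matrix and its eigenvalues look like, here is a minimal sketch in Python with NumPy. It assumes the usual definition of the structure matrix as the sum of gradient outer products over a window, and it uses an unweighted window for simplicity (a Gaussian weighting is also common). The function name `structure_matrix` and the three test patches are my own illustration, not something taken from the lecture.

```python
import numpy as np

def structure_matrix(patch):
    """Sum of gradient outer products over a patch (unweighted window)."""
    # np.gradient returns the derivative along axis 0 (rows, y) first,
    # then along axis 1 (columns, x).
    Iy, Ix = np.gradient(patch.astype(float))
    return np.array([
        [np.sum(Ix * Ix), np.sum(Ix * Iy)],
        [np.sum(Ix * Iy), np.sum(Iy * Iy)],
    ])

# Three hypothetical 8x8 test patches.
flat = np.zeros((8, 8))
edge = np.zeros((8, 8))
edge[:, 4:] = 1.0      # vertical edge
corner = np.zeros((8, 8))
corner[4:, 4:] = 1.0   # bright quadrant, i.e. a corner

for name, patch in [("flat", flat), ("edge", edge), ("corner", corner)]:
    # eigvalsh is meant for symmetric matrices and returns ascending eigenvalues.
    lam = np.linalg.eigvalsh(structure_matrix(patch))
    print(name, lam)
```

The flat patch gives two eigenvalues of zero, the edge gives one zero and one large eigenvalue, and the corner gives two clearly positive eigenvalues, which is the pattern a corner detector looks for.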

Solving the equation

Cyrill Stachniss seems to approach this in a different way. I reasoned this out myself, with some help from ChatGPT.

The eigenvalues of a matrix $A$ are found by solving the characteristic equation $\det(A - \lambda I) = 0$, where $I$ is the identity matrix and $\lambda$ represents the eigenvalues.

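For the 2x2 Structure Matrix of the Harris Corner Detector,

$$M = \begin{bmatrix} \sum I_x^2 & \sum I_x I_y \\ \sum I_x I_y & \sum I_y^2 \end{bmatrix}$$

Rewriting the matrix in simpler terms as $M = \begin{bmatrix} a & b \\ b & c \end{bmatrix}$, the Characteristic Equation is:

$$\det(M - \lambda I) = (a - \lambda)(c - \lambda) - b^2 = 0$$

Simplifying, we get:

$$\lambda^2 - (a + c)\lambda + (ac - b^2) = 0$$

Solving this equation gives us the eigenvalues.

Notice that $\operatorname{trace}(M) = a + c$ and $\det(M) = ac - b^2$

So we can simplify the equation to

$$\lambda^2 - \operatorname{trace}(M)\,\lambda + \det(M) = 0$$

- Plug the above into the Quadratic Formula and you will find the 2 eigenvalues: $\lambda_{1,2} = \frac{\operatorname{trace}(M) \pm \sqrt{\operatorname{trace}(M)^2 - 4\det(M)}}{2}$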
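As a sanity check on the quadratic-formula route, here is a small Python sketch. It assumes the symmetric 2x2 structure matrix is written as `[[a, b], [b, c]]` (these entry names and the function name are mine, chosen for illustration) and compares the result against NumPy's `np.linalg.eigvalsh`.

```python
import numpy as np

def eigenvalues_2x2_symmetric(a, b, c):
    """Eigenvalues of [[a, b], [b, c]] from lambda^2 - trace*lambda + det = 0."""
    trace = a + c
    det = a * c - b * b
    # For a symmetric matrix the discriminant equals (a - c)^2 + 4*b^2,
    # so it is never negative; the clamp only guards against rounding.
    disc = max(trace * trace - 4.0 * det, 0.0)
    root = np.sqrt(disc)
    return (trace - root) / 2.0, (trace + root) / 2.0

# Hypothetical example values for the matrix entries.
lam1, lam2 = eigenvalues_2x2_symmetric(2.0, 0.25, 2.0)
reference = np.linalg.eigvalsh(np.array([[2.0, 0.25], [0.25, 2.0]]))
print(lam1, lam2)   # 1.75 2.25
print(reference)    # same two values, in ascending order
```

The closed form avoids a general eigenvalue solver, which matters when the same computation has to run for every pixel.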

Are there always 2 eigenvalues?

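Not necessarily two distinct ones. The characteristic equation of a 2x2 matrix is a quadratic, so it always has two roots counting multiplicity, but the two roots can coincide. Two distinct eigenvalues guarantee that the matrix is diagonalizable. A matrix is defective when a repeated eigenvalue has only one independent eigenvector. The structure matrix is real and symmetric, so it is never defective: its eigenvalues are always real, and because it is a sum of outer products of gradients they are also non-negative. They are equal only when the matrix is a multiple of the identity.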
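To make the difference between a repeated eigenvalue and a defective matrix concrete, here is a short NumPy sketch. Both matrices are hypothetical examples of mine, and `eigenspace_dim` is a helper I made up; it counts the independent eigenvectors for a given eigenvalue as 2 minus the rank of $A - \lambda I$.

```python
import numpy as np

def eigenspace_dim(A, lam):
    """Number of independent eigenvectors of the 2x2 matrix A for eigenvalue lam."""
    return 2 - np.linalg.matrix_rank(A - lam * np.eye(2))

# Symmetric with a repeated eigenvalue 3: still has two independent eigenvectors.
symmetric_repeated = np.array([[3.0, 0.0],
                               [0.0, 3.0]])

# Not symmetric, repeated eigenvalue 3, only one independent eigenvector: defective.
defective = np.array([[3.0, 1.0],
                      [0.0, 3.0]])

print(eigenspace_dim(symmetric_repeated, 3.0))  # 2
print(eigenspace_dim(defective, 3.0))           # 1
```

The defective case needs a non-symmetric matrix, so it cannot occur for the structure matrix.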

So how is this threshold calculated? I have 2 different eigenvalues, how do I know if ?

In practice

How fast / slow does this algorithm run?

?