Image Classification

Image Classification problem: Assign a label (from a fixed set of categories) to an input image.

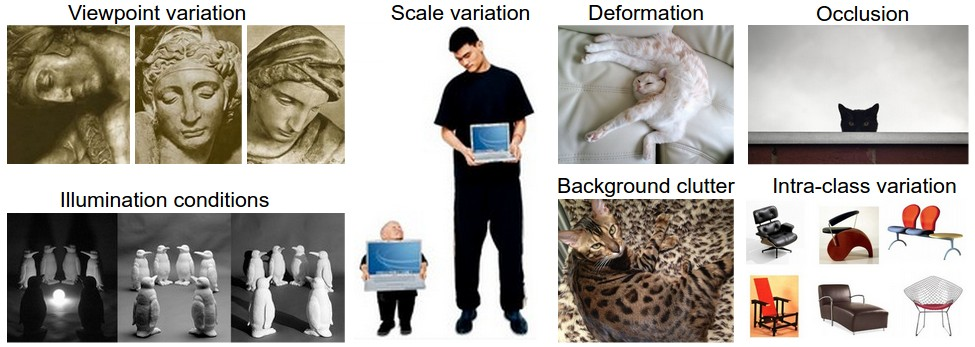

The image is just a 3D tensor of integers: for CIFAR-10, a 32 × 32 × 3 array of pixel values in [0, 255]. There is a semantic gap between this raw array of numbers and the label “cat” that a human assigns. Hard-coding rules (“cats have pointy ears, two eyes…”) is hopeless because of:

- Viewpoint variation (every pixel changes when the camera moves)

- Illumination

- Deformation (cats fold into arbitrary shapes)

- Occlusion

- Background clutter (object blending with environment)

- Intra-class variation (e.g., chairs come in very different shapes)
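To make the semantic gap concrete, the sketch below builds a synthetic image as nested lists of integers in [0, 255] and measures how many raw values change under a one-pixel shift (a stand-in for a small camera move) and under a brightness change (a stand-in for illumination). The helper names `make_image`, `shift_right`, `brighten`, and `fraction_changed` are hypothetical, and the pixel values are random rather than real CIFAR-10 data. Pure Python is used only to keep the example dependency-free.

```python
import random

def make_image(height, width, channels=3, seed=0):
    """Build a synthetic image: height x width x channels integers in [0, 255]."""
    rng = random.Random(seed)
    return [[[rng.randint(0, 255) for _ in range(channels)]
             for _ in range(width)]
            for _ in range(height)]

def shift_right(image):
    """Stand-in for a tiny camera move: shift each row one pixel right, wrapping around."""
    return [row[-1:] + row[:-1] for row in image]

def brighten(image, delta):
    """Stand-in for an illumination change: add delta to every value, clipped to [0, 255]."""
    return [[[min(255, max(0, v + delta)) for v in pixel] for pixel in row]
            for row in image]

def fraction_changed(a, b):
    """Fraction of integer entries that differ between two same-shaped images."""
    total = changed = 0
    for row_a, row_b in zip(a, b):
        for px_a, px_b in zip(row_a, row_b):
            for va, vb in zip(px_a, px_b):
                total += 1
                changed += va != vb
    return changed / total

image = make_image(32, 32)  # same shape as a CIFAR-10 image: 32 x 32 x 3
shape = (len(image), len(image[0]), len(image[0][0]))
shifted_frac = fraction_changed(image, shift_right(image))
bright_frac = fraction_changed(image, brighten(image, 40))
print(shape, shifted_frac, bright_frac)
```

In both cases nearly every stored integer changes, although a human would assign the same label to all three images. A rule written directly against pixel values has nothing stable to hold on to.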

The Data-Driven Approach

Instead of hard-coding rules, collect a dataset of images and labels, train a classifier on it, and evaluate the classifier on held-out test images. This is the API:

    def train(images, labels):
        # build a model
        return model

    def predict(model, test_images):
        # use model to predict labels
        return test_labels

Standard benchmark datasets in the lecture: MNIST (10 digits, 28 × 28 grayscale images), CIFAR-10 / CIFAR-100, ImageNet (1000 classes, ~1.4M images), Places365, Omniglot.
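The `train` / `predict` API above leaves the model unspecified. As one possible way to fill it in, the sketch below uses a nearest-neighbor classifier with L1 distance. This is an illustrative choice, not something this section prescribes. The helper `l1_distance`, the dictionary used as the "model", and the toy 2 × 2 grayscale images are all assumptions made for the example.

```python
def train(images, labels):
    """Nearest-neighbor 'training' simply memorizes the data (illustrative choice)."""
    if len(images) != len(labels):
        raise ValueError("images and labels must have the same length")
    return {"images": list(images), "labels": list(labels)}

def l1_distance(a, b):
    """Sum of absolute pixel differences between two flattened images."""
    return sum(abs(x - y) for x, y in zip(a, b))

def predict(model, test_images):
    """Label each test image with the label of its closest training image."""
    test_labels = []
    for test_image in test_images:
        distances = [l1_distance(test_image, train_image)
                     for train_image in model["images"]]
        nearest = distances.index(min(distances))
        test_labels.append(model["labels"][nearest])
    return test_labels

# Toy data: flattened 2 x 2 grayscale images (hypothetical, not a real dataset).
train_images = [[0, 0, 10, 5], [250, 255, 240, 245]]
train_labels = ["dark", "bright"]

# Held-out test images with known labels, used only for evaluation.
test_images = [[5, 3, 0, 8], [200, 220, 255, 230]]
true_labels = ["dark", "bright"]

model = train(train_images, train_labels)
predictions = predict(model, test_images)
accuracy = sum(p == t for p, t in zip(predictions, true_labels)) / len(true_labels)
print(predictions, accuracy)  # ['dark', 'bright'] 1.0
```

The test images never pass through `train`. Keeping evaluation data separate from training data is what makes the reported accuracy a fair estimate.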

Source

CS231n Lec 2 slides 4–19 (data-driven approach, semantic gap, datasets).