Coordinate Frame

A coordinate frame is a set of orthogonal axes attached to a body that serves to describe the positions of points relative to that body.

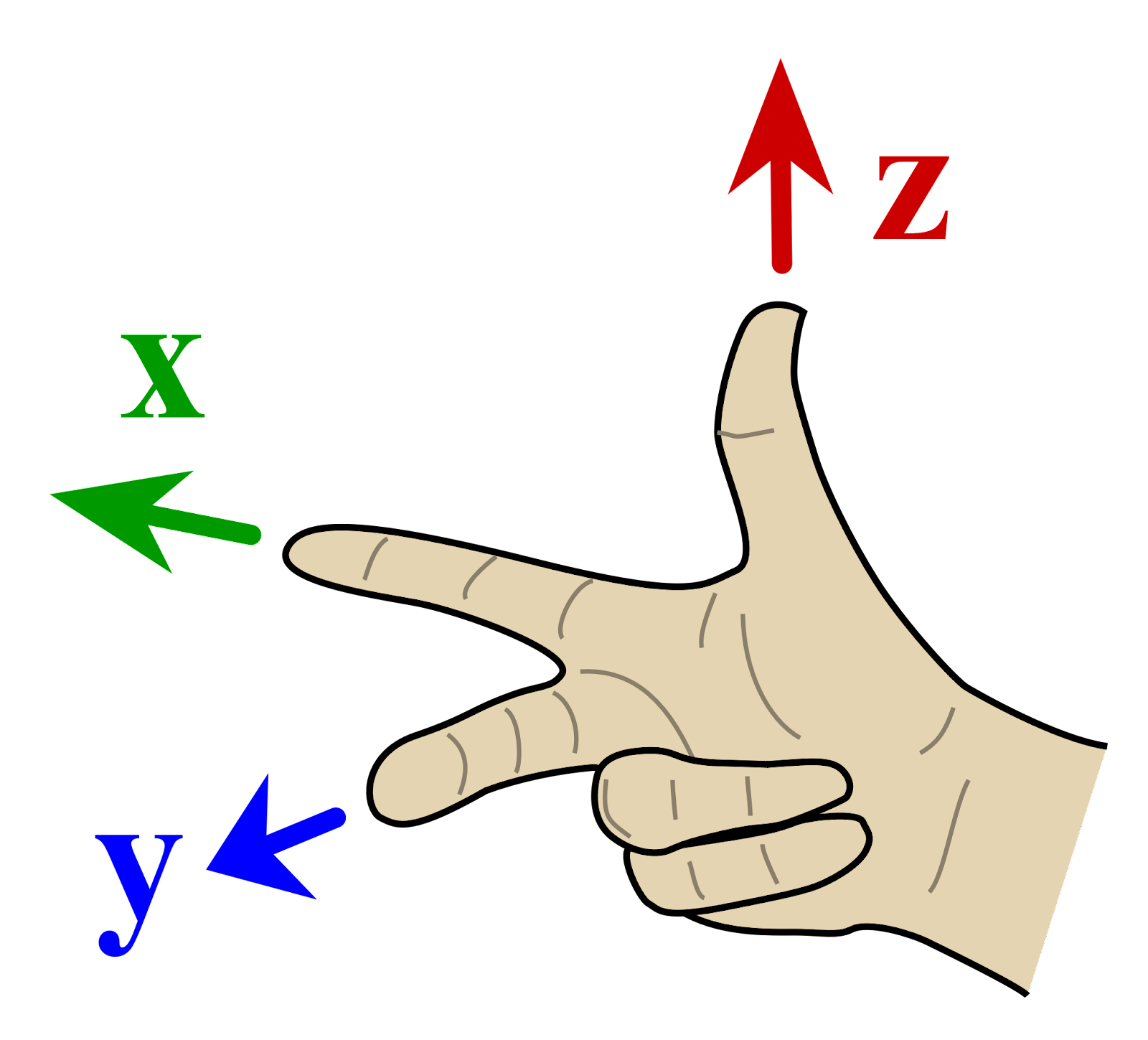

In robotics, we use right-handed coordinate systems.
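One way to check that a set of axes is right-handed is the cross product: in a right-handed frame, x × y must equal z. A minimal sketch using plain tuples (the helper names are hypothetical):

```python
def cross(a, b):
    """Cross product of two 3D vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def is_right_handed(x_axis, y_axis, z_axis):
    """True if the unit axes (x, y, z) form a right-handed frame.
    Exact comparison is fine for integer unit axes; use a tolerance for floats."""
    return cross(x_axis, y_axis) == tuple(z_axis)

print(is_right_handed((1, 0, 0), (0, 1, 0), (0, 0, 1)))   # True
print(is_right_handed((1, 0, 0), (0, 1, 0), (0, 0, -1)))  # False: flipping z makes it left-handed
```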

Robot (Canonical) Frame

According to REP 103, the standard axis convention relative to a body is:

- x forward, y left, and z up

Optical Frame

In contrast, the optical frame uses:

- x right, y down, z forward

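This is because an image is drawn on the x-y plane: pixel columns increase to the right and rows increase downward. For the frame to stay right-handed, z then points forward along the camera's viewing direction.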
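The two conventions differ only by a fixed rotation. As a minimal sketch, assuming a body-style camera frame and an optical frame that share the same origin, re-expressing a point is a relabeling of axes with two sign flips (the function names are hypothetical):

```python
def optical_to_body(p):
    """Optical (x right, y down, z forward) -> body (x forward, y left, z up).
    Assumes both frames share the same origin."""
    x_opt, y_opt, z_opt = p
    x_body = z_opt    # forward = optical z
    y_body = -x_opt   # left    = -(optical right)
    z_body = -y_opt   # up      = -(optical down)
    return (x_body, y_body, z_body)

def body_to_optical(p):
    """Inverse of optical_to_body."""
    x_body, y_body, z_body = p
    return (-y_body, -z_body, x_body)

print(optical_to_body((1.0, 2.0, 3.0)))  # (3.0, -1.0, -2.0)
```

In ROS this fixed rotation is usually expressed as a static transform between the camera's body-style frame and its optical frame rather than hard-coded.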

Reference Frame Conventions:

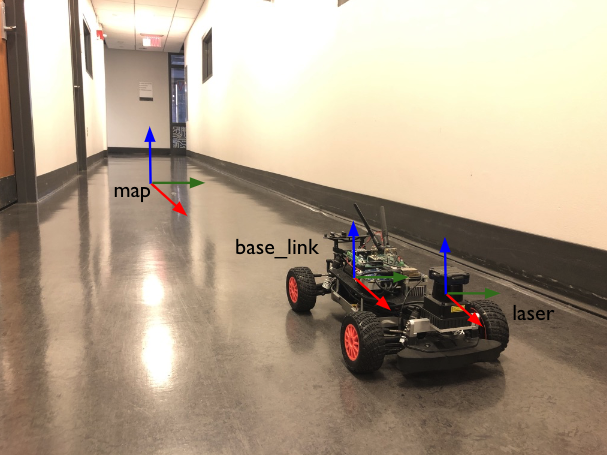

In many robotics problems, the first step is to assign a coordinate frame to all objects of interest.

Why can't we just use a single coordinate frame (i.e. `map`)? Whether you like it or not, the car is equipped with a LiDAR, and obstacles are measured in the reference frame of the `laser`, NOT in the reference frame of the `map`. You must convert measurements from one frame to another by applying transformations. In ROS, this is done with the tf2 library.
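tf2 handles full 3D transforms and time-stamped lookups; the sketch below only shows the underlying 2D math, assuming the laser's pose in the map, (x, y, yaw), is already known. The function name and numbers are hypothetical.

```python
import math

def laser_to_map(point_laser, laser_pose_in_map):
    """Transform a 2D point from the laser frame into the map frame.

    point_laser: (x, y) of an obstacle measured in the laser frame.
    laser_pose_in_map: (x, y, yaw) of the laser origin in the map frame, yaw in radians.
    """
    px, py = point_laser
    tx, ty, yaw = laser_pose_in_map
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate the point into the map's orientation, then translate by the laser position
    return (tx + c * px - s * py, ty + s * px + c * py)

# Laser at (1, 2) in the map, rotated 90 degrees to the left.
# An obstacle 1 m straight ahead of the laser lands at about (1, 3) in the map.
print(laser_to_map((1.0, 0.0), (1.0, 2.0, math.pi / 2)))
```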

Coordinate frames thus describe the origin of a measurement. A coordinate means nothing unless you also state which coordinate frame it is expressed in (e.g. `map`).
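ROS messages such as `geometry_msgs/PointStamped` carry the frame name in `header.frame_id` for exactly this reason. A minimal sketch of the same idea as a plain data structure (`FramedPoint` and `displacement` are hypothetical, not ROS APIs):

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FramedPoint:
    """A 3D point that always carries the name of the frame it is expressed in."""
    frame_id: str
    x: float
    y: float
    z: float

def displacement(a: FramedPoint, b: FramedPoint) -> FramedPoint:
    """Vector from a to b; only meaningful when both are in the same frame."""
    if a.frame_id != b.frame_id:
        raise ValueError(
            f"cannot compare points in '{a.frame_id}' and '{b.frame_id}'; "
            "transform one of them first"
        )
    return FramedPoint(a.frame_id, b.x - a.x, b.y - a.y, b.z - a.z)

p = FramedPoint("map", 1.0, 2.0, 0.0)
q = FramedPoint("map", 4.0, 6.0, 0.0)
print(displacement(p, q))  # FramedPoint(frame_id='map', x=3.0, y=4.0, z=0.0)

r = FramedPoint("laser", 1.0, 0.0, 0.0)
# displacement(p, r) raises ValueError because the frames differ
```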

REP 105 (Coordinate Frames for Mobile Platforms) lists the standard reference frames used by a mobile robot:

- `base_link`: rigidly attached to the mobile robot base
- `map`: world-fixed frame
- `odom`: also a world-fixed frame (see Odometry)
- `earth`: designed to allow multiple robots to interact in different `map` frames
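REP 105 arranges these frames in a chain: odometry publishes `odom` → `base_link`, and localization publishes `map` → `odom`, so the robot's pose in `map` is the composition of the two. A minimal 2D sketch of that composition, with hypothetical poses given as (x, y, yaw):

```python
import math

def compose(a, b):
    """Compose two 2D poses (x, y, yaw).
    a: pose of frame A in parent frame P; b: pose of frame B in frame A.
    Returns the pose of frame B in frame P."""
    ax, ay, ath = a
    bx, by, bth = b
    c, s = math.cos(ath), math.sin(ath)
    th = ath + bth
    return (ax + c * bx - s * by,
            ay + s * bx + c * by,
            math.atan2(math.sin(th), math.cos(th)))  # wrap yaw into [-pi, pi]

# Hypothetical values
map_to_odom = (0.5, -0.2, 0.1)   # correction published by localization
odom_to_base = (3.0, 1.0, 0.4)   # robot pose from odometry
map_to_base = compose(map_to_odom, odom_to_base)
print(map_to_base)
```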

Use odom for local sensing (like velocity calculations), and map for global localization estimates.

- “The `odom` frame is useful as an accurate, short-term local reference, but drift makes it a poor frame for long-term reference.”
- “The `map` frame is useful as a long-term global reference, but discrete jumps in position estimators make it a poor reference frame for local sensing and acting.”
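A small illustration of why velocity is computed from `odom`: a finite-difference speed estimate is smooth in `odom`, but a discrete localization jump in `map` shows up as a spurious spike. All positions and the time step below are hypothetical.

```python
import math

def finite_difference_speed(p0, p1, dt):
    """Speed estimate from two consecutive 2D positions (x, y) taken dt seconds apart."""
    return math.hypot(p1[0] - p0[0], p1[1] - p0[1]) / dt

dt = 0.1  # seconds between samples

# Same motion (about 1 m/s along x) seen in both frames.
odom_prev, odom_curr = (2.00, 0.0), (2.10, 0.0)  # continuous, no jumps
map_prev, map_curr = (2.00, 0.0), (2.60, 0.0)    # localization snapped the estimate by 0.5 m

print(finite_difference_speed(odom_prev, odom_curr, dt))  # ~1.0 m/s
print(finite_difference_speed(map_prev, map_curr, dt))    # ~6.0 m/s, a spurious spike
```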

The orientation of this coordinate frame is X-forward, Y-left, Z-up (REP 103 - Standard Units of Measure and Coordinate Conventions)

`odom` is the origin of the global axes; it is a fixed frame on the ground. `base_link` is the local frame; it is a moving frame attached to the robot.

What coordinate system?

Many 3D libraries and tools use right-handed coordinates (e.g. OpenGL, 3ds Max), while others use left-handed coordinates (e.g. Unity, Direct3D).
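Moving data between the two kinds of systems means remapping axes. A minimal sketch for positions, assuming Unity's axes (x right, y up, z forward, left-handed) and the REP 103 body axes (x forward, y left, z up, right-handed); the function names are hypothetical:

```python
def unity_to_ros(p):
    """Unity (x right, y up, z forward) -> ROS REP 103 (x forward, y left, z up)."""
    ux, uy, uz = p
    return (uz, -ux, uy)  # forward = z, left = -right, up = y

def ros_to_unity(p):
    """Inverse of unity_to_ros."""
    rx, ry, rz = p
    return (-ry, rz, rx)

print(unity_to_ros((1.0, 2.0, 3.0)))  # (3.0, -1.0, 2.0)
```

The underlying axis-mapping matrix has determinant -1, i.e. it contains a reflection, which is why no pure rotation can turn a left-handed frame into a right-handed one. Orientations (e.g. quaternions) need their own conversion and are not handled by this sketch.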

https://answers.ros.org/question/359697/which-frame-is-better-odom-or-base/